Software Development and Services: What You Should Get

Buying software development and services is harder than buying “code.” You are hiring a delivery system that must turn messy requirements into a reliable product, keep it secure, and keep it running after launch.

If you only receive a pile of pull requests and a few demos, you will pay for it later in rewrites, outages, stalled releases, and vendor lock-in. This guide explains what you should expect from professional software development and services, and how to verify it early.

First, define what “good” means for your business

Before you evaluate any team or proposal, make sure you can answer three questions in plain language:

- What outcome are we trying to achieve? (reduce processing time, increase conversion, replace a legacy system, enable self-serve onboarding)

- What must be true in production? (security, performance, reliability, auditability, data retention)

- What has to integrate with what? (identity, billing, ERP/CRM, data warehouse, partner APIs)

This matters because “software development and services” can describe anything from staff augmentation to end-to-end ownership. Without a shared definition of outcomes and constraints, you will compare vendors based on superficial differences like tech buzzwords or UI mockups.

A practical tip: treat non-functional requirements (NFRs) as first-class scope, not “nice to haves.” If you do not define them, you are implicitly accepting whatever defaults the vendor ships.

What you should get, by capability (not by job title)

Many proposals are organized by roles (frontend dev, backend dev, QA, DevOps). Buyers do better when they demand capabilities and proof artifacts.

1) Discovery that reduces uncertainty, not slideware

A competent team should help you narrow risk early, especially around unclear workflows, integrations, and data.

What “good” looks like:

- A short outcome brief (problem, users, success metrics, constraints)

- A prioritized risk list (security, data migration, integration, performance, compliance)

- A thin vertical slice plan (the smallest end-to-end slice that proves feasibility)

If discovery does not produce decisions and testable scope, it is theater.

2) Architecture decisions that are explicit and reversible

You do not need a 40-page architecture document, but you do need clear choices with recorded trade-offs.

Expect:

- A simple system boundary diagram (what is in scope, what stays external)

- Architecture Decision Records (ADRs) for major choices (stack, auth model, deployment, data stores)

- NFR “budgets” (latency targets, throughput expectations, availability goals)

A strong vendor will also explain what they are not building yet, and why.
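One way to make NFR budgets inspectable is to keep them as data and check measurements against them. The following is a minimal sketch, not a prescribed format: the `NfrBudget` class, the `check_budgets` helper, and every number in `BUDGETS` are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NfrBudget:
    """One non-functional target. Names and limits are illustrative assumptions."""
    name: str
    limit: float
    higher_is_better: bool = False  # availability is higher-is-better, latency is not

    def is_met(self, measured: float) -> bool:
        if self.higher_is_better:
            return measured >= self.limit
        return measured <= self.limit


def check_budgets(budgets, measurements):
    """Return human-readable violations; an empty list means every budget is met.

    A budget without a measurement counts as a violation, because a target
    nobody measures cannot be verified.
    """
    violations = []
    for budget in budgets:
        if budget.name not in measurements:
            violations.append(f"{budget.name}: no measurement")
            continue
        measured = measurements[budget.name]
        if not budget.is_met(measured):
            violations.append(
                f"{budget.name}: measured {measured}, budget {budget.limit}"
            )
    return violations


# Hypothetical budgets for one thin slice.
BUDGETS = [
    NfrBudget("p95_latency_ms", 300),
    NfrBudget("availability_pct", 99.9, higher_is_better=True),
    NfrBudget("throughput_rps", 50, higher_is_better=True),
]

if __name__ == "__main__":
    staging = {"p95_latency_ms": 350, "availability_pct": 99.95}
    for line in check_budgets(BUDGETS, staging):
        print(line)
```

A check like this could run after a load test in staging, so a missed budget shows up as a failed step rather than as a surprise in production.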

3) Working software increments, with change safety built in

You are paying for shipping, not “being busy.” Each sprint or iteration should produce a usable increment that can be validated.

Expect:

- A real repository you can access, with a clear structure

- Code reviews that enforce standards (not just approvals)

- Automated tests that run in CI (at least unit + a small end-to-end smoke suite)

- Repeatable environments (local dev, staging) that match production assumptions

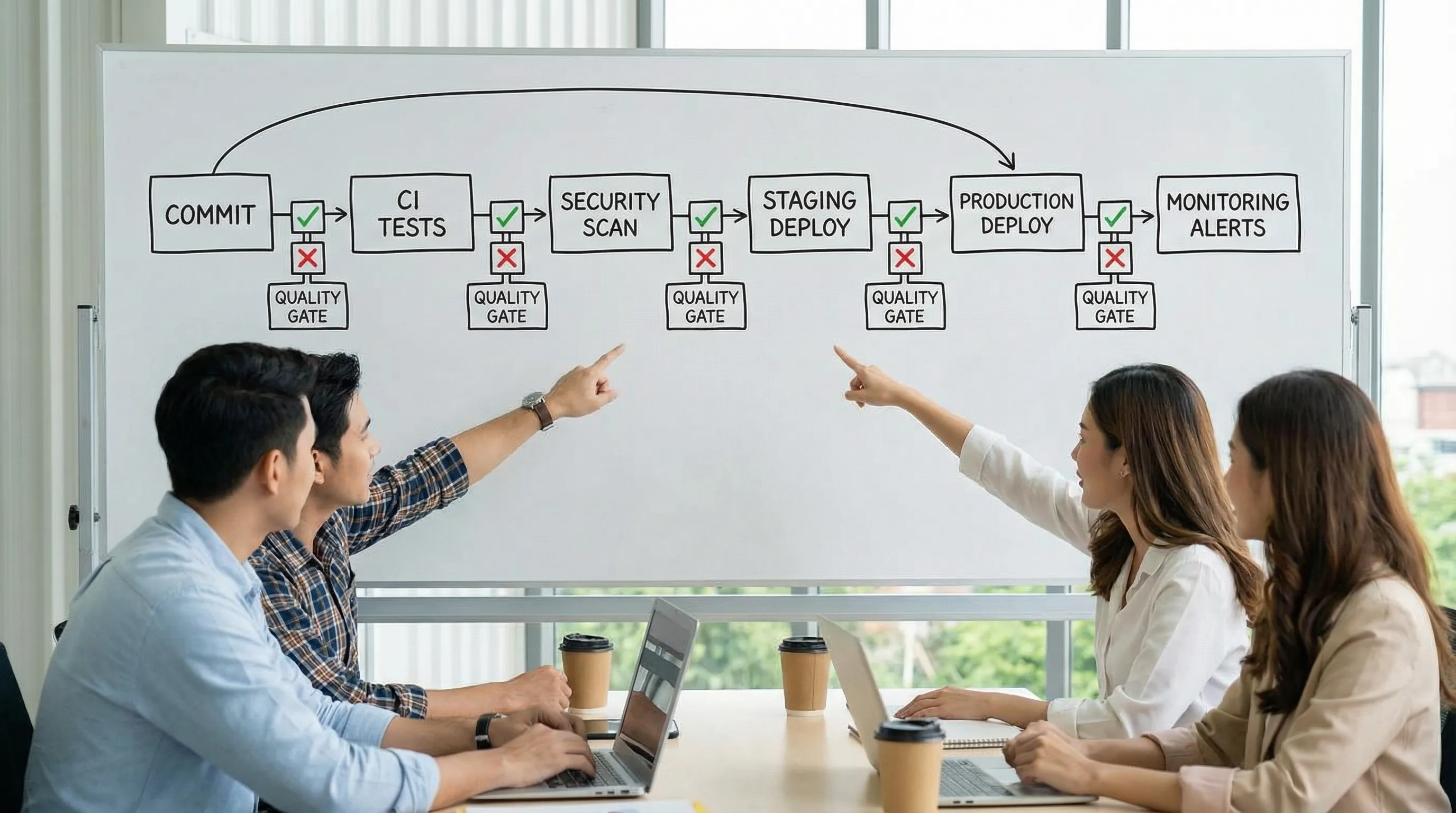

If the vendor cannot show you the build pipeline and test results, you cannot judge delivery risk.

4) Security as a baseline, not a phase at the end

Security is not a checkbox you “do later.” In 2026, buyers should assume supply chain risk, auth mistakes, and data exposure are common failure modes.

Expect:

- A defined security baseline (authn/authz approach, secrets management, secure headers)

- Dependency and vulnerability scanning in CI

- A lightweight threat model for high-risk features (payments, admin, file uploads)

For shared terminology and coverage, it is reasonable to map practices to common references like the OWASP Top 10.
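To make "what blocks merges" concrete, a team can write the rule down as a small policy and evaluate scanner findings against it. The sketch below assumes findings have already been normalized to dicts with a `severity` key; the `MERGE_POLICY` thresholds and the `merge_blockers` function are hypothetical and do not correspond to any specific scanner's output format.

```python
from collections import Counter

# Hypothetical policy: maximum number of open findings allowed per severity.
# A severity that is missing from the policy is treated as unlimited.
MERGE_POLICY = {"critical": 0, "high": 0, "medium": 5}


def merge_blockers(findings, policy=MERGE_POLICY):
    """Return the reasons a pull request should be blocked (empty list = mergeable).

    `findings` is a list of dicts with at least a "severity" key. That shape
    is an assumption; real scanner exports need to be mapped into it first.
    """
    counts = Counter(f["severity"].lower() for f in findings)
    reasons = []
    for severity, allowed in policy.items():
        found = counts.get(severity, 0)
        if found > allowed:
            reasons.append(f"{found} {severity} finding(s), policy allows {allowed}")
    return reasons


if __name__ == "__main__":
    sample = [{"severity": "High", "id": "EXAMPLE-1"}, {"severity": "low"}]
    for reason in merge_blockers(sample):
        print("BLOCKED:", reason)
```

The value for a buyer is less the code than the fact that the thresholds are written down and reviewable, instead of living in someone's head.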

5) DevOps and operability you can measure

“Deployed” is not the same as “operable.” Professional services include the ability to detect issues quickly and recover without drama.

Expect:

- Infrastructure as code or documented, repeatable setup

- A basic observability stack (logs, metrics, tracing where needed)

- Production dashboards for core signals

- Alerting that reflects user impact (not noise)

- Runbooks for common incidents

If you care about faster lead time and lower change failure rate, align on delivery metrics early. DORA’s research popularized a practical set of measures (lead time for changes, deployment frequency, change failure rate, and time to restore service, often shortened to MTTR). The DORA public hub is a helpful reference point for teams.
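The four measures are simple enough to compute from a deployment log, which makes them easy to ask for. The sketch below uses simplified definitions and a hypothetical `Deployment` record; the field names are assumptions, and real teams usually derive this data from their CI/CD and incident tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def delivery_metrics(deployments, period_days):
    """Summarize the four measures over a period, using simplified definitions."""
    if not deployments:
        raise ValueError("no deployments in period")
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    restore_times = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "deployments_per_week": len(deployments) / (period_days / 7),
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        # None when nothing failed (or nothing has been restored yet).
        "mean_time_to_restore": (
            sum(restore_times, timedelta()) / len(restore_times)
            if restore_times
            else None
        ),
    }


if __name__ == "__main__":
    start = datetime(2026, 1, 5, 9, 0)
    log = [
        Deployment(start, start + timedelta(hours=2)),
        Deployment(start, start + timedelta(hours=4), failed=True,
                   restored_at=start + timedelta(hours=5)),
        Deployment(start, start + timedelta(hours=6)),
    ]
    for name, value in delivery_metrics(log, period_days=7).items():
        print(name, value)
```

Agreeing on the definitions matters more than the arithmetic: decide up front what counts as a deployment, a failure, and a restore, or the numbers will not be comparable over time.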

6) Data and integrations that are contract-first

Integrations are where schedules go to die. You should expect early proof and explicit contracts.

Expect:

- API contracts (OpenAPI/JSON schema or equivalent)

- A plan for versioning and backward compatibility

- Database migration discipline (reviewed migrations, rollback strategy where possible)

- Test environments or mocks for critical third-party systems

If a vendor postpones integration work until “after the UI is done,” you are buying a surprise.
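A backward-compatibility plan becomes checkable once contracts are files that can be compared. The following sketch compares two versions of a flat, JSON-Schema-like request object and lists changes that could break existing clients. The `breaking_changes` function and its rules are a deliberately conservative illustration, not a complete schema diff; nested objects, enums, and formats are ignored.

```python
def breaking_changes(old, new):
    """List changes in `new` that could break clients written against `old`.

    Both arguments are JSON-Schema-like dicts with "properties" and
    "required" keys. Only flat objects are handled in this sketch.
    """
    problems = []
    old_props = old.get("properties", {})
    new_props = new.get("properties", {})

    # Fields that existing clients may rely on must keep their name and type.
    for name, spec in old_props.items():
        if name not in new_props:
            problems.append(f"removed field: {name}")
        elif spec.get("type") != new_props[name].get("type"):
            problems.append(
                f"type changed: {name} "
                f"({spec.get('type')} -> {new_props[name].get('type')})"
            )

    # A field that becomes required rejects requests that used to be valid.
    newly_required = set(new.get("required", [])) - set(old.get("required", []))
    for name in sorted(newly_required):
        problems.append(f"newly required field: {name}")
    return problems


if __name__ == "__main__":
    # Hypothetical versions of a payment request contract.
    v1 = {
        "properties": {"id": {"type": "string"}, "amount": {"type": "number"}},
        "required": ["id"],
    }
    v2 = {
        "properties": {"id": {"type": "integer"}, "currency": {"type": "string"}},
        "required": ["id", "currency"],
    }
    for problem in breaking_changes(v1, v2):
        print(problem)
```

Adding an optional field passes this check, which matches the usual rule of thumb: additive changes are safe, while removals, type changes, and new required fields call for a new version.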

7) Governance that keeps decisions unblocked

A common reason projects stall is unclear decision rights and weak feedback loops.

Expect:

- A predictable weekly cadence (demo, risk review, decisions)

- A visible backlog tied to outcomes (not a random list of tasks)

- A decision log (what was decided, why, and by whom)

- Clear ownership for product, tech, and operations

This is also where you should clarify how AI-assisted coding is handled (IP ownership, code review expectations, security scanning, and policy).

8) Handover, continuity, and “what happens after launch”

If the relationship ends at launch, you will own a system you cannot safely change.

Expect:

- Onboarding documentation (how to run, test, deploy)

- An architecture overview that matches reality

- Access and ownership transfer (cloud accounts, domains, CI, secrets)

- A support model (even if it is “you own it,” define a transition period)

A buyer-friendly checklist: deliverables and how to verify them quickly

Use the table below in vendor conversations. It focuses on evidence you can inspect, not promises.

| Capability area | What you should receive | How to verify (fast) |
|---|---|---|
| Discovery | Outcome brief + risk list + thin-slice plan | Ask for a 1 to 2 page brief and the first slice definition with acceptance criteria |
| Architecture | Boundary diagram + ADRs + NFR budgets | Review 3 to 5 ADRs and confirm trade-offs are documented |
| Delivery | Working increments + CI pipeline | Watch a live walkthrough of CI, tests, and a staging deploy |
| Quality | Automated checks (lint, types, tests) | Open the repo and find the commands and CI status for main |
| Security | Baseline controls + scanning | Ask what runs on each PR (SAST/SCA, secret scan) and what blocks merges |
| Operability | Dashboards + alerts + runbooks | Ask to see production (or staging) dashboards and a sample incident runbook |
| Data & integration | Contracts + migration strategy | Ask for OpenAPI/schema files and one example of contract testing |
| Handover | Docs + access transfer plan | Request a handover checklist and confirm you will own accounts and repos |

Where “software development and services” must adapt to your industry

Some domains require more than generic best practices. For example:

- In regulated environments, you may need audit trails, retention policies, and stricter access controls.

- In energy and procurement contexts, pricing rules, contract terms, and reporting can be the real complexity, not the UI.

If your product touches business energy usage or sourcing, it can help to follow industry perspectives and constraints from organizations representing commercial energy users, such as the BVGE (Bundesverband der gewerblichen Energienutzer), especially when defining requirements that impact procurement workflows and governance.

Red flags that predict disappointment

These patterns show up in failing engagements across industries:

- No testable definition of done: “done” means merged, not shipped or measurable.

- Quality is manual: testing is mostly clicking, and CI is slow or optional.

- Security is postponed: authentication and authorization are hand-waved until late.

- Integration is deferred: critical third-party dependencies are “Phase 2.”

- No operational story: nobody can explain monitoring, alerting, or rollback.

- Unclear ownership: decisions bounce between meetings and never land.

If you see two or more of these, insist on a short paid proof sprint (often 2 to 4 weeks) that demonstrates delivery, integration, and operability for one thin vertical slice.

How to bake expectations into your SOW (without writing a novel)

You do not need a legal rewrite to get better outcomes. You need a SOW that forces clarity.

Include:

- Acceptance criteria per slice (functional + NFRs that matter)

- Required artifacts (ADRs, API contracts, CI gates, runbooks)

- Access and ownership (repos, cloud accounts, domains, data)

- Security baseline (what scans run, what blocks merges)

- Release and rollback approach (how you reduce launch risk)

If you want templates and deeper playbooks, Wolf-Tech has practical guides on building safer delivery systems, for example the software project kickoff checklist and a milestone-based implementation plan.

Frequently Asked Questions

What is included in software development and services?

It should include more than coding: discovery, architecture decisions, implementation, quality gates, security, DevOps, observability, documentation, and a clear handover or support model.

How do I know if a vendor’s “full-stack” claim is real?

Ask for proof artifacts: CI pipelines, test strategy, API contracts, deployment approach, observability, and runbooks. Full-stack is demonstrated by end-to-end ownership, not a title.

Should I require a discovery phase before development?

Yes, unless the scope is extremely well known. A short discovery that produces a thin vertical slice plan and a risk list can prevent expensive rework.

What deliverables matter most in the first month?

A working thin slice in a real environment, documented key decisions (ADRs), CI with automated tests, security basics in place, and at least a starter monitoring setup.

How should pricing and timelines be handled for custom work?

Favor incremental milestones with clear acceptance criteria and measurable proof. Avoid contracts that only list features without NFRs, integration scope, or operational requirements.

Need a second opinion on a proposal or an existing codebase?

Wolf-Tech provides full-stack development and consulting across code quality, legacy optimization, tech stack strategy, and production readiness. If you want an evidence-driven review of what you are being sold, or you need help turning requirements into a shippable plan, explore Wolf-Tech at wolf-tech.io and start with a focused assessment before committing to a long engagement.