Programming Code: 10 Habits That Prevent Production Bugs

Production bugs rarely come from “bad developers.” They come from normal software pressure: shifting requirements, partial context, coupled systems, and feedback that arrives too late (after users have already hit the issue).

The good news is that preventing bugs is less about heroics and more about repeatable habits. The teams that ship reliably tend to write code with the same mindset: make intent explicit, reduce batch size, enforce guardrails automatically, and release in a way that’s easy to observe and reverse.

Below are 10 practical habits you can adopt without changing your whole stack.

What “production bugs” actually are (and why they survive testing)

Most production bugs fall into a few buckets:

- Behavior gaps: the code does what was written, but not what users or the business rules require.

- Integration surprises: contracts between services, APIs, and data stores are assumed rather than enforced.

- State and concurrency issues: retries, timeouts, partial failures, and race conditions were not modeled.

- Environment drift: “works on my machine” becomes “breaks in prod” because config, dependencies, or migrations differ.

- Release and operations gaps: changes ship without the telemetry and rollout controls needed to catch problems early.

That’s why “test more” is not a complete answer. You want a system where bugs have fewer places to hide.

Programming code: 10 habits that prevent production bugs

Habit 1: Write down the behavior before you write the code

Before implementation, make the behavior testable in plain language. Not a novel, just enough to eliminate ambiguity.

What this looks like in practice:

- A short outcome statement (who, what, why).

- Concrete acceptance criteria including error states.

- A few example inputs and expected outputs.

- Non-functional constraints when relevant (latency, security, auditability).

This habit prevents “works as coded” bugs, because you are coding against explicit rules, not assumptions.
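As a rough illustration, the “example inputs and expected outputs” can live next to the code as a small table of cases. The rule below (“an order quantity must be a whole number from 1 to 99”) and the names `parseQuantity`, `examples`, and `failingExamples` are hypothetical, chosen only to show the shape; this is a sketch, not a prescribed format.

```typescript
// Hypothetical rule used only for illustration:
// "An order quantity must be a whole number from 1 to 99."
type QuantityResult =
  | { ok: true; value: number }
  | { ok: false; error: "not_a_number" | "not_whole" | "out_of_range" };

function parseQuantity(raw: string): QuantityResult {
  const trimmed = raw.trim();
  const value = Number(trimmed);
  if (trimmed === "" || Number.isNaN(value)) {
    return { ok: false, error: "not_a_number" };
  }
  if (!Number.isInteger(value)) return { ok: false, error: "not_whole" };
  if (value < 1 || value > 99) return { ok: false, error: "out_of_range" };
  return { ok: true, value };
}

// The acceptance criteria, written as example inputs and expected outputs,
// including the error states.
const examples: Array<{ input: string; expected: QuantityResult }> = [
  { input: "1", expected: { ok: true, value: 1 } },
  { input: " 99 ", expected: { ok: true, value: 99 } },
  { input: "0", expected: { ok: false, error: "out_of_range" } },
  { input: "100", expected: { ok: false, error: "out_of_range" } },
  { input: "2.5", expected: { ok: false, error: "not_whole" } },
  { input: "abc", expected: { ok: false, error: "not_a_number" } },
  { input: "", expected: { ok: false, error: "not_a_number" } },
];

// Returns the inputs whose actual result differs from the written expectation.
function failingExamples(): string[] {
  return examples
    .filter((e) => JSON.stringify(parseQuantity(e.input)) !== JSON.stringify(e.expected))
    .map((e) => e.input);
}
```

Writing the table first tends to surface the ambiguous cases (is `0` allowed? is whitespace trimmed?) before any implementation exists.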

If you want a more structured approach, Wolf-Tech’s guide on turning requirements into maintainable code is a good companion: “Code software basics: turning requirements into maintainable code.”

Habit 2: Ship smaller changes than you think you need

Big changes create big risk because reviewers cannot reason about them, tests take longer, and rollback is harder.

Aim for:

- Small pull requests that change one thing.

- Thin vertical slices (UI, API, data, and ops touched together) instead of “layer by layer.”

- Frequent merges to reduce long-lived branch drift.

This reduces production bugs because each change has a smaller blast radius and is easier to validate.

Related reading for teams that need a practical delivery rhythm: “Software building: a practical process for busy teams.”

Habit 3: Treat boundaries as hostile (and validate there)

Many production bugs are “bad inputs” bugs. The fix is not paranoia everywhere; it is strictness at boundaries:

- Validate request payloads at the API edge.

- Validate events/messages when consumed.

- Validate data coming from third parties.

- Use schema and runtime validation, not just TypeScript types or documentation.

This habit prevents bugs where unexpected values slip into the system and only fail deep in the call chain.
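A minimal sketch of runtime validation at an API edge, with no library dependency. The `CreateUserRequest` shape, its field rules, and the function names are assumptions made up for this example; in a real project a schema library would usually replace the hand-written checks.

```typescript
// Hypothetical payload shape for a "create user" request.
interface CreateUserRequest {
  email: string;
  age: number;
  role: "admin" | "member";
}

type Parsed<T> = { ok: true; value: T } | { ok: false; errors: string[] };

function isRecord(value: unknown): value is Record<string, unknown> {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// Validates untrusted input once, at the edge. Code past this point can rely
// on the CreateUserRequest type because the values were checked at runtime.
function parseCreateUserRequest(input: unknown): Parsed<CreateUserRequest> {
  if (!isRecord(input)) {
    return { ok: false, errors: ["body must be a JSON object"] };
  }

  const errors: string[] = [];
  const { email, age, role } = input;

  if (typeof email !== "string" || !email.includes("@")) {
    errors.push("email must be a string containing '@'");
  }
  if (typeof age !== "number" || !Number.isInteger(age) || age < 0) {
    errors.push("age must be a non-negative integer");
  }
  if (role !== "admin" && role !== "member") {
    errors.push("role must be 'admin' or 'member'");
  }

  if (errors.length > 0) return { ok: false, errors };
  // The cast is safe here only because every field was checked above.
  return { ok: true, value: { email, age, role } as CreateUserRequest };
}
```

The input is typed as `unknown` on purpose: TypeScript types are erased at compile time, so a payload declared as `CreateUserRequest` without a runtime check is only a promise, not a guarantee.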

Habit 4: Prefer types that make invalid states unrepresentable

Types are not only for autocomplete. Used well, they remove entire bug classes.

Examples:

- Use enums (or union types) instead of magic strings.

- Model “not loaded / loading / success / error” explicitly instead of booleans.

- Use distinct types for IDs that must not be mixed (UserId vs OrgId), where your language allows it.

Types reduce bugs because they shift discovery from runtime to compile time, and they force you to think about edge cases earlier.
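The examples above could look roughly like this in TypeScript. The names `RemoteData`, `describeState`, `asUserId`, and `loadUserName` are illustrative assumptions, and the branding trick shown is one common convention rather than a language feature.

```typescript
// Branded IDs: both are plain strings at runtime, but the compiler refuses
// to accept one where the other is expected.
type UserId = string & { readonly __brand: "UserId" };
type OrgId = string & { readonly __brand: "OrgId" };

const asUserId = (raw: string): UserId => raw as UserId;
const asOrgId = (raw: string): OrgId => raw as OrgId;

// One tagged union instead of separate isLoading / hasError booleans, so a
// combination like "loading and failed at once" cannot be constructed.
type RemoteData<T> =
  | { status: "notLoaded" }
  | { status: "loading" }
  | { status: "success"; data: T }
  | { status: "error"; message: string };

function describeState<T>(state: RemoteData<T>): string {
  switch (state.status) {
    case "notLoaded":
      return "Nothing requested yet";
    case "loading":
      return "Loading...";
    case "success":
      return `Loaded: ${JSON.stringify(state.data)}`;
    case "error":
      return `Failed: ${state.message}`;
    default: {
      // Exhaustiveness check: adding a new status without handling it in
      // this switch becomes a compile-time error.
      const unreachable: never = state;
      return unreachable;
    }
  }
}

// Hypothetical lookup that only accepts a UserId.
function loadUserName(id: UserId): RemoteData<string> {
  return id.length === 0
    ? { status: "error", message: "empty user id" }
    : { status: "success", data: `user-${id}` };
}

const state = loadUserName(asUserId("42"));
// loadUserName(asOrgId("42")); // would not compile: OrgId is not a UserId
```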

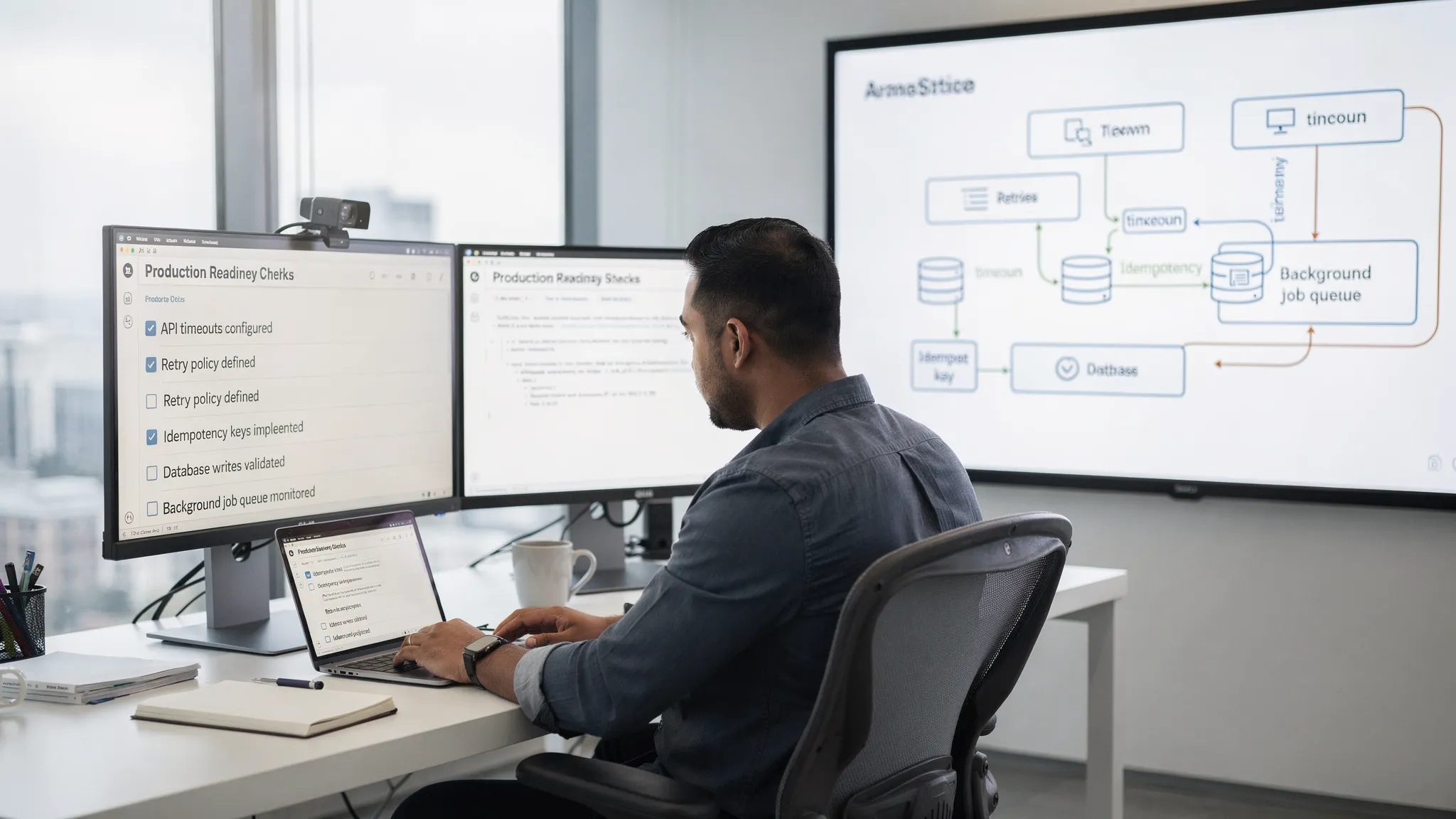

Habit 5: Make failure modes first-class (timeouts, retries, idempotency)

In production, failure is normal: networks flap, dependencies slow down, and clients retry.

A production-safe default set of rules:

- Put timeouts everywhere a call can hang.

- Use retries only when the operation is safe to repeat, and bound them (a capped number of attempts, with backoff between them).

- Make write operations idempotent (so repeating them does not duplicate side effects).

- Treat partial failures as an expected state and design the UX and APIs accordingly.
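A minimal sketch of the first three rules, using only standard promises and timers. `withTimeout`, `retry`, and `PaymentService` are hypothetical names, and the in-memory `Map` stands in for whatever durable store a real system would use for idempotency keys.

```typescript
// Rejects if `work` has not settled within `ms` milliseconds. This only stops
// the caller from waiting; it does not cancel the underlying work.
function withTimeout<T>(work: Promise<T>, ms: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(() => reject(new Error(`timed out after ${ms} ms`)), ms);
    work.then(
      (value) => { clearTimeout(timer); resolve(value); },
      (error) => { clearTimeout(timer); reject(error); },
    );
  });
}

// Bounded retry with exponential backoff. Only wrap operations that are safe
// to repeat (reads, or writes protected by an idempotency key).
async function retry<T>(
  operation: () => Promise<T>,
  maxAttempts: number,
  baseDelayMs: number,
): Promise<T> {
  if (maxAttempts < 1) throw new RangeError("maxAttempts must be at least 1");
  let lastError: unknown;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      return await operation();
    } catch (error) {
      lastError = error;
      if (attempt < maxAttempts) {
        const delayMs = baseDelayMs * 2 ** (attempt - 1);
        await new Promise<void>((resolve) => setTimeout(resolve, delayMs));
      }
    }
  }
  throw lastError;
}

// Idempotent write: repeating a call with the same key returns the first
// result instead of performing the side effect again.
class PaymentService {
  private readonly processed = new Map<string, string>();
  chargeCount = 0;

  charge(idempotencyKey: string, amountCents: number): string {
    const existing = this.processed.get(idempotencyKey);
    if (existing !== undefined) return existing;
    this.chargeCount += 1;
    const receipt = `receipt-${this.chargeCount}-${amountCents}`;
    this.processed.set(idempotencyKey, receipt);
    return receipt;
  }
}
```

The three pieces are meant to be combined: a client might call `retry(() => withTimeout(send(request), 2000), 3, 100)` (where `send` is whatever performs the call) and attach the same idempotency key to every attempt, so a retry after a timeout cannot produce a duplicate charge.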

If you want a deeper reliability-focused framing, see: “Backend development best practices for reliability.”

Habit 6: Make “done” include automated checks (not intentions)

If a check is important, it should be automated and consistently enforced.

Common high-leverage quality gates:

- Formatting and lint rules

- Static analysis (including type checks)

- Unit and integration tests

- Dependency and secret scanning

This habit prevents bugs caused by inconsistent discipline across developers, repos, or teams.

For JavaScript teams, Wolf-Tech’s checklist is a practical baseline: “JS code quality checklist: lint, types, tests, CI.”

Habit 7: Review for risk, not style

Code review should answer: “What could go wrong in production?” not “Do I like this variable name?”

High-signal review prompts:

- What are the failure modes and how are they handled?

- What is the rollback plan?

- What could break with real data volume or concurrency?

- Are contracts (API, events, schemas) explicit and compatible?

- Are we leaking sensitive data in logs or responses?

Style should be handled by formatters and lint rules. Human review is too expensive to waste on bracket placement.

Habit 8: Add at least one integration-level proof for critical flows

Unit tests catch logic bugs, but production issues often appear at seams: database queries, serialization, auth, caching, and external dependencies.

A pragmatic approach:

- Pick a small set of critical user journeys.

- Ensure each has at least one integration-level test (or contract test) that runs in CI.

- Keep the suite small and fast enough to trust.

This prevents “everything passed but it still broke” releases.

If you need to balance speed and safety, DORA metrics are a useful lens for improving delivery without slowing down: DORA metrics overview.

Habit 9: Instrument before you ship (and tag releases)

Observability is not optional if you want fewer production bugs. Without telemetry, a bug stays undetected longer and costs more to diagnose and fix.

Minimum viable instrumentation for new changes:

- Structured logs with correlation IDs

- Service-level metrics (error rate, latency, saturation)

- Traces for request paths across services

- Release tagging (so you can correlate an error spike to a deployment)

This habit prevents small issues from becoming long incidents because you can detect and diagnose quickly.
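As one possible shape for the first and last items, here is a small structured logger that stamps every line with a correlation ID and a release tag. The field names (`service`, `release`, `correlationId`) and the functions `formatLog` and `createLogger` are assumptions for illustration; adapt them to whatever your log pipeline expects.

```typescript
// Hypothetical context attached to every log line.
interface LogContext {
  service: string;
  release: string;       // for example a version tag or commit SHA set at build time
  correlationId: string; // propagated from the incoming request
}

type Level = "info" | "warn" | "error";

function formatLog(
  ctx: LogContext,
  level: Level,
  message: string,
  fields: Record<string, unknown> = {},
  now: Date = new Date(),
): string {
  // Fixed keys are placed after the caller's fields so that caller-supplied
  // data cannot overwrite the level, message, or context.
  return JSON.stringify({
    ...fields,
    timestamp: now.toISOString(),
    level,
    message,
    ...ctx,
  });
}

// `sink` defaults to console.log; tests or other transports can pass their own.
function createLogger(ctx: LogContext, sink: (line: string) => void = console.log) {
  const write = (level: Level) => (message: string, fields?: Record<string, unknown>) =>
    sink(formatLog(ctx, level, message, fields));
  return { info: write("info"), warn: write("warn"), error: write("error") };
}
```

With the release on every line, “did errors start with the last deployment?” becomes a filter on one field rather than a comparison of timestamps against a deploy log.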

Wolf-Tech’s standardization guidance also emphasizes observability early because it reduces coordination cost and production risk: “What to standardize first when scaling software development technology.”

Habit 10: Release progressively and keep rollback cheap

Many bugs are not obvious until real users and real traffic hit the change. Progressive delivery lets you learn safely.

Practical progressive rollout patterns:

- Feature flags for new behavior

- Canary deployments (small percentage first)

- Blue/green deployments when you need fast cutover and rollback

- Post-deploy verification checks (smoke tests, key metrics gates)

This habit prevents “all users are impacted” incidents and makes recovery faster when something slips through.
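A feature flag with a percentage rollout can be sketched in a few lines. This version assumes a hypothetical `FlagConfig` shape and buckets users with an FNV-1a style hash over the string's UTF-16 code units; a real flag service would add persistence, targeting rules, and auditing.

```typescript
// Deterministic hash (FNV-1a style, 32-bit) so a given user always lands in
// the same bucket across requests and deployments.
function hashToBucket(key: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < key.length; i++) {
    hash ^= key.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return (hash >>> 0) % 100; // bucket in the range 0..99
}

// Hypothetical flag configuration, typically loaded from config at runtime.
interface FlagConfig {
  enabled: boolean;       // kill switch: false turns the feature off for everyone
  rolloutPercent: number; // 0..100
}

function isEnabled(flagName: string, flag: FlagConfig, userId: string): boolean {
  if (!flag.enabled) return false;
  // Including the flag name gives each flag its own independent bucketing.
  return hashToBucket(`${flagName}:${userId}`) < flag.rolloutPercent;
}
```

Because the bucket is stable, raising `rolloutPercent` from 10 to 50 only adds users and never flips anyone who already had the feature. Rollback is a configuration change (`enabled: false`), not a redeploy.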

A simple “proof” checklist you can copy into PR templates

The goal is not bureaucracy; it is making risk visible.

| Habit | What it prevents | Minimal proof artifact |
|---|---|---|
| Behavior written down | Misinterpretation bugs | Acceptance criteria and edge cases captured |
| Small changes | Large blast radius | PR size stays reviewable, reversible deployment plan |
| Boundary validation | Bad inputs and inconsistent contracts | Schema validation at API/message edge |
| Strong types | Invalid states at runtime | Type checks in CI, explicit domain types |
| Failure modeling | Retry storms, duplicates, hangs | Timeouts, idempotency keys, safe retries |
| Automated gates | Inconsistent quality | CI gates for lint, types, tests, scans |
| Risk-based review | “Looks fine” failures | Review checklist focused on runtime risk |
| Integration proofs | Seam failures | One integration/contract test per critical flow |
| Observability | Undetected issues | Metrics/logs/traces plus release tagging |
| Progressive delivery | Full-scale incidents | Feature flag or canary plan and rollback |

Frequently Asked Questions

Do these habits slow down development?

They usually speed it up after an initial adjustment because teams spend less time firefighting, doing hotfixes, and context-switching during incidents.

Which habit should we adopt first?

Start with small, reversible changes (Habit 2) and automated CI quality gates (Habit 6). They improve outcomes quickly and make every other habit easier.

How many tests do we need to prevent production bugs?

Enough to protect critical journeys and boundaries. A small, trusted integration suite plus targeted unit tests is typically more valuable than a huge flaky test pyramid.

What if we have a legacy codebase with little test coverage?

Start by adding characterization tests around high-risk hotspots, introduce CI gates gradually, and refactor incrementally. Wolf-Tech’s legacy-focused guidance can help: “Refactoring legacy applications: a strategic guide.”

Are feature flags required for progressive delivery?

Not always, but they are one of the cheapest ways to reduce rollout risk for behavior changes. For infrastructure-level changes, canary or blue/green may be more appropriate.

Need fewer production bugs without slowing your roadmap?

Wolf-Tech helps teams improve reliability through full-stack development, code quality consulting, and legacy code optimization. If you want a practical assessment of where bugs are entering your system, and a prioritized plan to reduce change failure rate, get in touch via wolf-tech.io.