Software Basics: From Requirements to Maintainable Code

Software teams rarely struggle because they “can’t code”. They struggle because the code doesn’t stay easy to change once real users, real data, and real deadlines arrive.

Maintainable code is not a style preference. It is the practical outcome of turning requirements into:

- clear boundaries (what belongs where)

- explicit contracts (what calls what, and what it guarantees)

- fast feedback (tests, CI, observability)

- disciplined change (small, reversible releases)

This guide covers the software basics of converting requirements into an implementation that remains readable, testable, and safe to evolve.

What “requirements” you actually need before writing production code

When teams say “we have requirements,” they often mean a feature list. Feature lists are a weak input for engineering because they hide the details that create long-term complexity.

For maintainability, you want requirements that capture three things:

1) Outcome and users (the “why”)

A requirement should name the primary user and the measurable outcome.

Example:

- “Admins can invite team members so onboarding takes under 2 minutes.”

That sentence already forces better engineering decisions than “Add invite flow,” because it introduces a time budget and a user role.

2) Rules and invariants (the “must always be true”)

Most future bugs are violations of business rules that were never made explicit.

Examples:

- “An invite can be accepted only once.”

- “Invites expire after 7 days.”

- “A user cannot be invited if they already belong to the workspace.”

These rules should become centralized code (domain logic), not scattered across controllers, UI components, and database queries.
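As an illustration, the three invite rules above could live in one small domain module. This is a minimal sketch, not a prescribed design: the names `Invite`, `InviteError`, `create_invite`, and `INVITE_TTL` are hypothetical, and it assumes the caller passes the workspace's member emails already lowercased.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional, Set

# "Invites expire after 7 days."
INVITE_TTL = timedelta(days=7)


class InviteError(Exception):
    """Raised when an invite rule would be violated."""


@dataclass
class Invite:
    email: str
    created_at: datetime
    accepted_at: Optional[datetime] = None

    def is_expired(self, now: datetime) -> bool:
        # An invite is expired once more than INVITE_TTL has passed since creation.
        return now - self.created_at > INVITE_TTL

    def accept(self, now: datetime) -> None:
        # "An invite can be accepted only once."
        if self.accepted_at is not None:
            raise InviteError("invite already accepted")
        if self.is_expired(now):
            raise InviteError("invite expired")
        self.accepted_at = now


def create_invite(email: str, member_emails: Set[str], now: datetime) -> Invite:
    # "A user cannot be invited if they already belong to the workspace."
    # Assumption: member_emails holds lowercased addresses.
    normalized = email.lower()
    if normalized in member_emails:
        raise InviteError("user already belongs to the workspace")
    return Invite(email=normalized, created_at=now)
```

Controllers, UI handlers, and jobs would then call `create_invite` and `Invite.accept` instead of re-implementing the checks, so a rule change happens in one place.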

3) Non-functional requirements (NFRs) and constraints (the “how it must behave”)

Maintainability is tightly tied to NFRs because they drive architecture and code structure.

Common NFRs that affect your code shape:

- Performance and latency targets

- Reliability expectations (SLOs, error budgets)

- Security and compliance constraints

- Data retention and auditability

If you do not write these down, you will still “implement them,” but by accident and late.

For a practical way to align UX expectations with engineering constraints early, Wolf-Tech describes a repeatable collaboration loop in Web Application Designing: UX to Architecture Handshake.

The translation layer: turning requirements into engineering artifacts

Requirements do not directly become code. They become a small set of artifacts that make intent testable.

Here is a pragmatic mapping you can adopt on most teams.

| Requirement input | Engineering artifact | Why it improves maintainability | “Proof” you can verify |
|---|---|---|---|
| Outcome + user | Thin vertical slice plan | Forces end-to-end thinking and reveals hidden work early | A working slice in a preview or production-like env |
| Business rules | Domain model + invariants | Prevents rules from leaking into random layers | Centralized rule tests; no duplicated validation |
| Data needs | Schema + migration plan | Avoids accidental data models and painful rewrites | Reviewed migrations; constraints; seed/test data |
| Integrations | Contract-first API spec (OpenAPI/GraphQL schema/event schema) | Reduces coupling and clarifies ownership | Contract tests; versioning strategy |
| NFRs | Budgets + SLOs + operability checklist | Makes “quality” measurable and enforceable | CI gates, dashboards, alerts, load test results |
| Risky assumptions | Spike or prototype with exit criteria | Prevents building on unknowns | Decision recorded; measurable result |

If you want a broader delivery lifecycle view, Wolf-Tech’s Build Stack blueprint is a helpful companion, but the table above is the core “requirements to code” bridge.

Start with a shared domain language, then draw boundaries

Maintainable code tends to have one visible trait: it is obvious where a change should go.

That comes from boundaries, not from clever abstractions.

Use the domain language as your folder structure and module seams

Instead of organizing by technical layers only (controllers/services/repos), start by naming business capabilities:

- Billing

- Catalog

- Identity

- Reporting

- Notifications

Inside each capability, you can still use layers, but the top-level intent stays business-first. This reduces “mystery folders,” and it makes onboarding faster.

Prefer a modular monolith until you have proven reasons not to

Many teams reach for microservices because they want boundaries. But you can get strong boundaries inside one deployable by enforcing module rules, ownership, and contracts.

A modular monolith often improves maintainability because it:

- keeps refactors cheaper (no distributed coordination)

- avoids network failure modes

- simplifies local development and debugging
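Module rules inside a single deployable only help if something checks them. The sketch below is one possible approach, assuming each capability is a top-level Python package; the `ALLOWED_IMPORTS` rule set and the `boundary_violations` helper are hypothetical. It parses a module's source with the standard `ast` module and reports imports of capabilities it is not allowed to depend on.

```python
import ast
from typing import Dict, List, Set

# Hypothetical rule set: which capability packages each capability may import.
ALLOWED_IMPORTS: Dict[str, Set[str]] = {
    "identity": set(),
    "billing": {"identity"},
    "notifications": {"identity", "billing"},
}


def boundary_violations(module: str, source: str) -> List[str]:
    """Return the capabilities that `module` imports without being allowed to."""
    allowed = ALLOWED_IMPORTS.get(module, set())
    imported: Set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            imported.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Only absolute imports are checked; relative imports stay inside the package.
            imported.add(node.module.split(".")[0])
    return sorted(
        name
        for name in imported
        if name in ALLOWED_IMPORTS and name != module and name not in allowed
    )


# Identity must not depend on Billing, so this import is reported.
print(boundary_violations("identity", "from billing.invoices import Invoice\n"))
```

A check like this can run in CI over every file in a capability, which turns the boundary from a convention into a failing build.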

Wolf-Tech covers the decision trade-offs in Software Applications: When to Go Modular Monolith First.

Define contracts before you implement (and treat them as “requirements that compile”)

A large share of maintenance pain comes from implicit assumptions between components:

- what an API returns on edge cases

- how errors are shaped

- how pagination works

- whether retries are safe

Make those assumptions explicit early, and keep them close to code.
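Take “whether retries are safe” as an example. One common way to make that assumption explicit is an idempotency key: the caller sends a unique key per logical operation, and the server replays the stored result on a retry instead of running the operation again. The sketch below is illustrative only; `IdempotentHandler` is a hypothetical name, and the in-memory dictionary stands in for whatever durable store a real service would use.

```python
from typing import Callable, Dict


class IdempotentHandler:
    """Runs an operation at most once per idempotency key and replays the stored result."""

    def __init__(self, operation: Callable[[dict], dict]) -> None:
        self._operation = operation
        # Assumption: in-memory storage, for illustration only.
        self._results: Dict[str, dict] = {}

    def handle(self, idempotency_key: str, payload: dict) -> dict:
        # A retry with a known key returns the earlier result without side effects.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        result = self._operation(payload)
        self._results[idempotency_key] = result
        return result
```

With this in place, “retries are safe when the client reuses the same key” becomes a statement the contract can document and a test can verify.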

Practical contract defaults

- APIs: define endpoints and schemas first (OpenAPI for REST, schema-first for GraphQL)

- Events: define event name, version, payload schema, and consumer expectations

- Data: write down invariants as database constraints when possible (unique keys, foreign keys, check constraints)
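To illustrate the data default, here is a small sketch using Python's built-in `sqlite3` module. The `workspaces` and `invites` tables and the `try_insert` helper are hypothetical; the point is that a unique key, a foreign key, and a check constraint reject bad rows even when application code forgets to validate.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite does not enforce foreign keys by default
conn.executescript(
    """
    CREATE TABLE workspaces (
        id INTEGER PRIMARY KEY
    );
    CREATE TABLE invites (
        id INTEGER PRIMARY KEY,
        workspace_id INTEGER NOT NULL REFERENCES workspaces(id),
        email TEXT NOT NULL,
        status TEXT NOT NULL DEFAULT 'pending'
            CHECK (status IN ('pending', 'accepted', 'expired')),
        UNIQUE (workspace_id, email)
    );
    """
)
conn.execute("INSERT INTO workspaces (id) VALUES (1)")
conn.execute("INSERT INTO invites (workspace_id, email) VALUES (1, 'a@example.com')")


def try_insert(sql: str) -> bool:
    """Return True if the insert succeeds, False if a constraint rejects it."""
    try:
        conn.execute(sql)
        return True
    except sqlite3.IntegrityError:
        return False


# Duplicate invite for the same workspace: rejected by the UNIQUE constraint.
print(try_insert("INSERT INTO invites (workspace_id, email) VALUES (1, 'a@example.com')"))
```

The same idea carries over to other relational databases, although the exact syntax and defaults differ.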

For GraphQL-specific pitfalls and mitigations (auth, query cost, N+1), see GraphQL APIs: Benefits, Pitfalls, and Use Cases.

Security is part of the contract

If your requirement says “Only admins can do X,” that is not a UI detail. It is a contract across:

- UI visibility

- API authorization

- audit logging expectations

A widely used baseline is the OWASP Application Security Verification Standard (ASVS), which helps translate “secure” into verifiable controls.
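On the API side, “Only admins can do X” could be sketched as follows. Everything here is hypothetical (`User`, `AuditLog`, `Forbidden`, and `remove_member` are illustrative names), and the in-memory audit log stands in for a real audit sink. The point is that the permission check and the audit entry sit next to the operation rather than in the UI.

```python
from dataclasses import dataclass, field
from typing import List


class Forbidden(Exception):
    """Raised when the actor is not allowed to perform the action."""


@dataclass
class User:
    id: str
    role: str


@dataclass
class AuditLog:
    entries: List[dict] = field(default_factory=list)

    def record(self, actor_id: str, action: str, allowed: bool) -> None:
        self.entries.append({"actor": actor_id, "action": action, "allowed": allowed})


def remove_member(actor: User, member_id: str, audit: AuditLog) -> str:
    # Authorization is enforced server-side, whatever the UI shows or hides.
    allowed = actor.role == "admin"
    # Both allowed and denied attempts are recorded.
    audit.record(actor.id, f"remove_member:{member_id}", allowed)
    if not allowed:
        raise Forbidden("only admins can remove members")
    return member_id
```

Hiding the button for non-admins is still worthwhile, but it is the third part of the contract, not a substitute for the first two.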

Make requirements executable with tests (the maintainability multiplier)

Tests are not just for catching regressions. Done right, they are your most durable form of documentation.

The goal is not “high coverage.” The goal is confidence per unit of change.

Test the rules where they live

- Business invariants should have fast unit tests.

- Integration boundaries should have contract tests.

- A small number of end-to-end tests should protect the most valuable journeys.

A maintainability anti-pattern is putting business rules only in end-to-end tests, because those tests are slow, brittle, and hard to debug.
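For the integration boundary, a contract test can be as small as checking that a response still has the agreed fields and types. The sketch below is deliberately hand-rolled and assumes a hypothetical invite resource with `id`, `email`, and `status` fields; a real project would more likely validate against its OpenAPI or GraphQL schema with a dedicated tool.

```python
from typing import Any, Dict, List

# Hypothetical contract for an invite resource: field name -> expected type.
INVITE_CONTRACT: Dict[str, type] = {"id": str, "email": str, "status": str}


def contract_violations(payload: Dict[str, Any], contract: Dict[str, type]) -> List[str]:
    """List every way the payload breaks the contract (an empty list means it conforms)."""
    problems = []
    for name, expected in contract.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected):
            problems.append(f"wrong type for {name}: expected {expected.__name__}")
    return problems


# A provider response that silently changed `id` from a string to a number.
response = {"id": 42, "email": "a@example.com", "status": "pending"}
print(contract_violations(response, INVITE_CONTRACT))
```

Run against the provider in CI, a check like this catches a “slight” backend change before clients do, and it runs in milliseconds compared with an end-to-end journey.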

Keep the “definition of done” code-shaped

If you want requirements to become maintainable code, your team needs a minimum bar for what “implemented” means. A pragmatic baseline:

- automated checks run in CI (lint, format, tests)

- code reviewed with a clear checklist

- observability hooks added for critical flows (logs/metrics/traces)

- feature is released safely (feature flags or canary where appropriate)

On the metrics side, the DORA research popularized four delivery metrics (deployment frequency, lead time for changes, change failure rate, time to restore service) as measures of software delivery performance. The current home for that work is the DORA site on Google Cloud.

Convert NFRs into code-level budgets and guardrails

Non-functional requirements often fail because they remain “paragraphs.” Paragraphs do not run in CI.

Instead, translate NFRs into budgets and enforce them.

Examples:

- Performance: page LCP budget, API p95 latency budget

- Reliability: timeouts, retry policy, idempotency requirements

- Maintainability: maximum acceptable cyclomatic complexity in hotspots, PR size targets
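As a sketch of what “enforce them” can mean for the API p95 latency budget, the check below computes a nearest-rank percentile over latency samples and compares it to a budget. The function names, the sample values, and the 250 ms budget are assumptions for illustration; in practice the samples would come from a load test or production metrics.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def within_budget(samples_ms: Sequence[float], budget_ms: float, pct: float = 95.0) -> bool:
    """True when the chosen percentile of the samples does not exceed the budget."""
    return percentile(samples_ms, pct) <= budget_ms


# Hypothetical latency samples in milliseconds and a hypothetical 250 ms p95 budget.
latencies_ms = [120, 80, 95, 300, 110]
if not within_budget(latencies_ms, budget_ms=250):
    print("p95 latency budget exceeded")
```

Wired into a CI job that fails on a breach, the budget stops being a paragraph and becomes a gate.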

The quality model in ISO/IEC 25010 is a useful reference vocabulary for attributes like maintainability, reliability, and security, even if you implement them with your own team-specific metrics.

Wolf-Tech’s Code Quality Metrics That Matter goes deeper on choosing metrics that drive outcomes rather than vanity numbers.

The most common failure modes (and how to prevent them)

Failure mode: “Requirements as a checklist”

Symptoms:

- big batch delivery

- late integration surprises

- rewrites after “done”

Prevention: ship a thin vertical slice early with real integration and real telemetry.

Failure mode: Rules scattered across layers

Symptoms:

- bug fixes require touching UI, API, and database code in multiple places

- inconsistent validation messages and edge case behavior

Prevention: define domain invariants once, then reuse them at boundaries.

Failure mode: Contracts emerge from implementation

Symptoms:

- clients break when backend changes “slightly”

- integration work takes longer than feature work

Prevention: contract-first specs and contract tests.

Failure mode: “We will refactor later” becomes “never”

Symptoms:

- slow velocity

- fear-driven development

Prevention: bake refactoring into normal delivery, and use risk controls. Wolf-Tech’s Refactoring Legacy Applications: A Strategic Guide is a practical playbook for doing this incrementally.

A lightweight workflow you can run next sprint

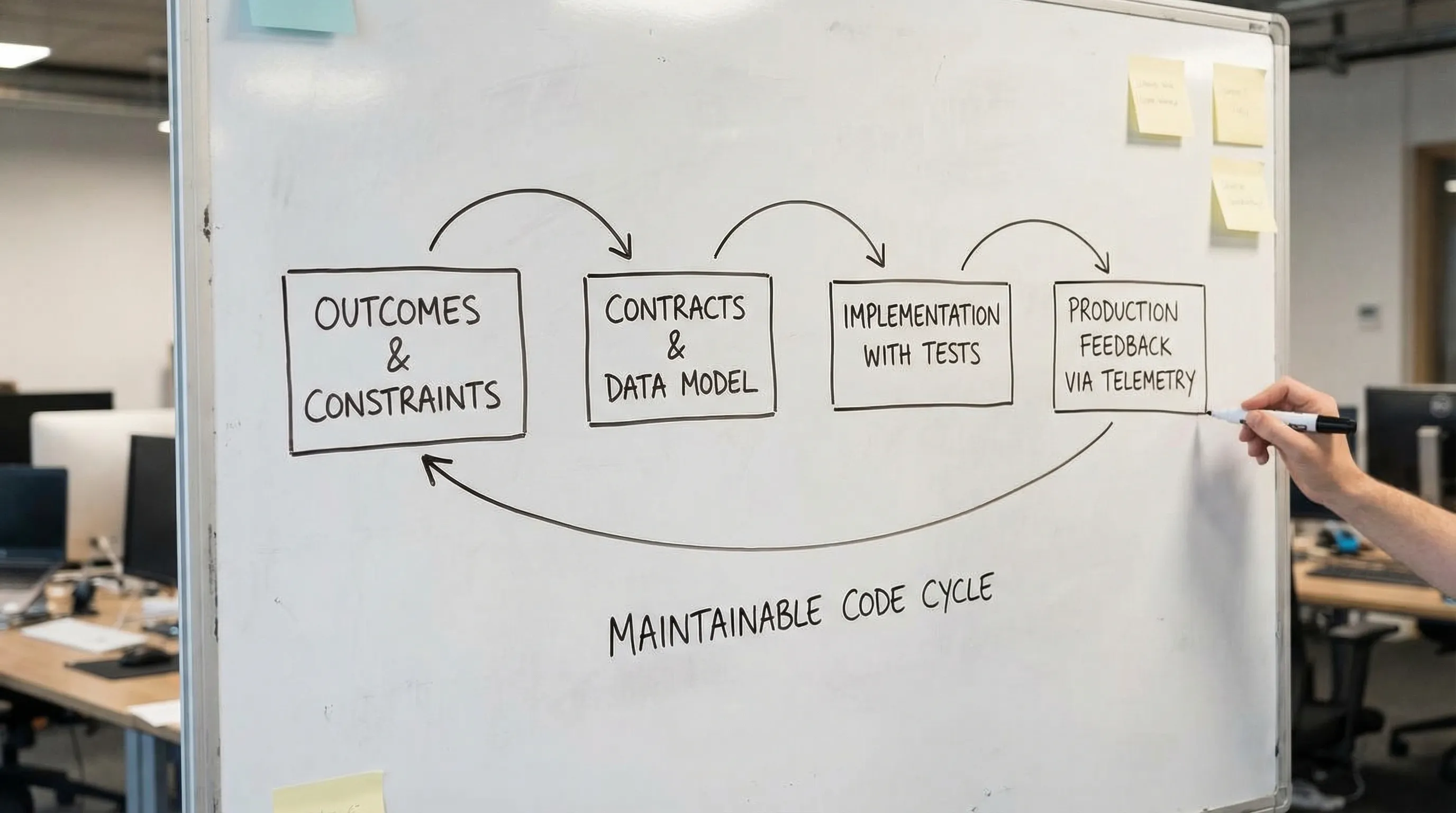

You do not need heavy process to get maintainable code. You need a repeatable loop.

Step 1: Rewrite the requirement into a testable slice

A good slice has:

- a user and an outcome

- a boundary (what is in, what is out)

- acceptance criteria that include at least one NFR

Step 2: Identify the contracts and invariants

Before coding, capture:

- API or event schema

- core data entities and invariants

- error cases and permission rules

This can be a short doc or an ADR, but it must be reviewable.

Step 3: Implement with “maintainability defaults”

Defaults that consistently pay off:

- small PRs

- consistent naming aligned with the domain

- code structured by capability

- automated formatting and linting

Step 4: Prove it works in production-like conditions

That means:

- realistic data volume or representative fixtures

- logs/metrics that show the happy path and failure path

- a rollback or disable strategy (feature flag, revert, or safe degradation)
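The disable strategy can be very small. The sketch below shows a hypothetical in-process feature flag guarding a new invite flow, with the legacy path kept as the fallback. `FeatureFlags`, `send_invite`, and the flag name `new_invite_flow` are illustrative, and a real system would typically read flags from configuration or a flag service.

```python
from typing import Callable, Dict


class FeatureFlags:
    """Minimal in-memory flag store, for illustration only."""

    def __init__(self, flags: Dict[str, bool]) -> None:
        self._flags = dict(flags)

    def is_enabled(self, name: str) -> bool:
        # Unknown flags default to off, so missing configuration never enables new code.
        return self._flags.get(name, False)

    def set(self, name: str, enabled: bool) -> None:
        self._flags[name] = enabled


def send_invite(
    email: str,
    flags: FeatureFlags,
    new_sender: Callable[[str], str],
    legacy_sender: Callable[[str], str],
) -> str:
    # The new path runs only while the flag is on; turning it off is the rollback.
    if flags.is_enabled("new_invite_flow"):
        return new_sender(email)
    return legacy_sender(email)
```

Turning the flag off restores the old behavior without a deploy, which is what makes the release reversible.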

Step 5: Close the loop with feedback

After release, review:

- did you hit the NFR budgets?

- did support tickets reveal missing requirements?

- did the change increase complexity in a hotspot?

This is how requirements improve over time and how code stays maintainable.

When it makes sense to bring in outside help

If your team is shipping, but maintainability is slipping, you typically need one of these interventions:

- a short architecture and code quality assessment with prioritized fixes

- a legacy optimization plan that avoids a risky rewrite

- a delivery system tune-up (CI/CD, quality gates, operability)

Wolf-Tech provides full-stack development and consulting across code quality, legacy optimization, architecture strategy, Cloud and DevOps, and custom software development. If you want a second set of senior eyes on how your requirements translate into real code, you can explore Wolf-Tech at wolf-tech.io.