Back End Software Developer: Skills That Prevent Outages

Outages rarely come from “one bad line of code.” They come from a chain of small, preventable failures: an unbounded retry that amplifies traffic, a migration that locks a hot table, a missing timeout that turns a dependency wobble into a full incident, or a release that changes an API contract without realizing who depends on it.

A strong back end software developer is valuable not just because they can build features, but because they can ship change without breaking production. This article breaks down the concrete skills that most directly prevent outages, how they show up in day-to-day engineering, and how to evaluate them in hiring or internal growth.

What “prevents outages” in backend work (in practice)

Outage prevention is mostly about reducing blast radius and improving recovery speed, not chasing perfection.

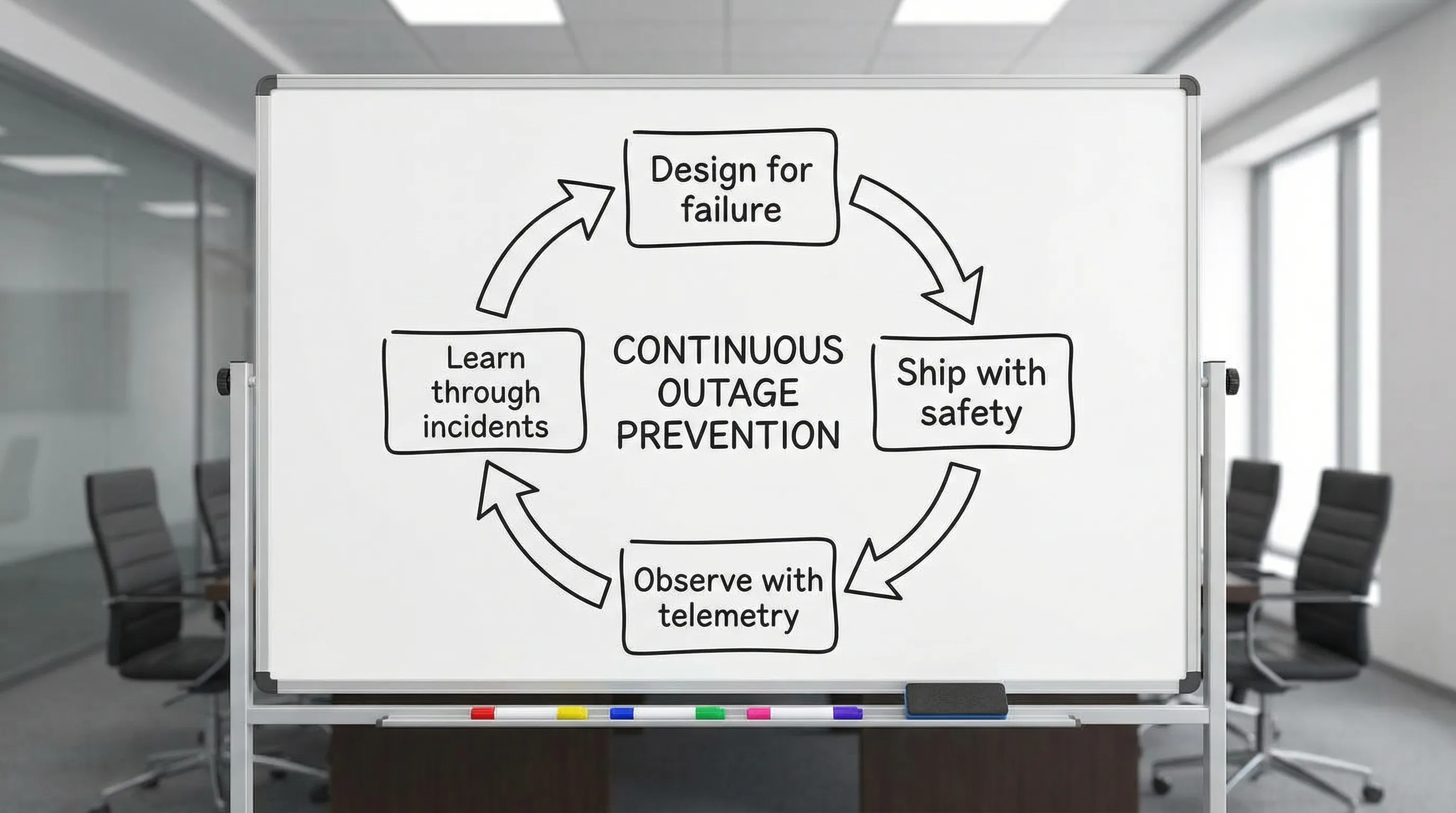

A backend engineer who prevents outages consistently does three things:

- They design for failure (because dependencies, networks, and humans fail).

- They ship with safety (because most incidents are change-related).

- They make systems observable (because you cannot fix what you cannot see).

This is aligned with the SRE view of reliability as an engineering discipline, not a hero activity. If your organization is new to these concepts, Google’s Site Reliability Engineering book is still the best free foundation.

The common outage patterns backend teams keep repeating

Different industries have different risk profiles, but backend incidents tend to cluster into a few recurring patterns.

| Outage pattern | What it looks like | Typical root cause | Prevention skill that matters most |

|---|---|---|---|

| Change-induced regression | Errors spike right after deploy | Missing tests, missing rollout safety, weak contracts | Change safety, contract thinking |

| Dependency failure cascade | One API slows, then everything times out | No timeouts, retries without backoff, no bulkheads | Resilience engineering |

| Database overload or lock contention | p95 latency climbs, then timeouts | N+1 queries, missing indexes, unsafe migrations | Data discipline |

| Queue or async backlog | Jobs pile up, customer actions stall | No backpressure, no DLQ strategy, poor idempotency | Async reliability |

| Capacity cliff | Works until traffic jump, then collapses | No load testing, no limits, poor caching strategy | Performance and capacity thinking |

| Observability gap | “We don’t know what broke” | Missing tracing/metrics, noisy logs, no SLOs | Operational visibility |

If you want a layer-by-layer set of implementation practices, Wolf-Tech also has a companion guide: Backend Development Best Practices for Reliability. This article stays focused on the skills behind those practices.

Skill 1: Failure modeling, knowing how systems actually fail

A backend system is a graph of dependencies: databases, caches, third-party APIs, queues, identity providers, object storage, and internal services. Outages often happen when engineers implicitly assume “happy path” behavior.

A back end software developer with strong failure modeling skills will routinely ask:

- What happens if this dependency returns slowly, not incorrectly?

- What happens if this request is retried?

- What happens if the same message is delivered twice?

- What happens if we deploy version N while some clients still call version N-1?

This skill is less about memorizing patterns and more about consistently thinking in modes: partial failure, degradation, concurrency, and recovery.

What to look for in code

Good failure modeling becomes visible as small, boring decisions:

- Timeouts on outbound calls (and timeouts that are consistent with the end-to-end latency budget).

- Defensive defaults and input validation.

- Bounded resource usage (connection pools, worker concurrency, payload sizes).

- Clear error classification (retryable vs non-retryable).

Skill 2: Resilience patterns that stop small failures from becoming incidents

Most backend outages are not caused by a dependency failing, they are caused by your system failing because a dependency degraded.

A backend engineer who prevents outages understands how and when to apply these patterns:

- Timeouts: every network call must have one.

- Retries with backoff and jitter: and only for safe, retryable operations.

- Idempotency: so retries do not create duplicate side effects.

- Circuit breakers and bulkheads: to stop cascading failure.

- Load shedding and rate limits: to keep the system alive under stress.

The key skill is trade-off judgment. For example, retries can improve success rate, but can also amplify load during an incident. Senior engineers know that resilience without observability can be dangerous, because you can “hide” failures until you hit a cliff.

Skill 3: Data discipline, the database is usually the outage surface

Backend outages frequently involve data issues because data sits at the center of critical paths.

A strong backend engineer treats the database as a first-class production system, not a dumping ground.

The sub-skills that matter most

Schema and constraint thinking. Constraints (foreign keys, uniqueness, not null where appropriate) prevent bad data states that later cause crashes or operational workarounds.

Query literacy. Preventing N+1 patterns, understanding how indexes work, and knowing how to interpret query plans are outage-prevention skills, not “performance tuning.”

Safe migrations. Many incidents are self-inflicted during schema changes. An outage-preventing developer understands:

- Expand and contract migrations for backward compatibility.

- Avoiding long-running locks on hot tables.

- Rollback strategy and verification queries.

If you are modernizing a legacy codebase where database change risk is high, incremental approaches from Refactoring Legacy Applications: A Strategic Guide apply directly, especially when you need to add safety nets before touching core flows.

Skill 4: Contract thinking, backward compatibility over “perfect APIs”

A surprising number of outages are really integration failures: a client expects a field that disappeared, an event schema changes silently, or an endpoint’s semantics drift.

Contract thinking means a backend engineer makes API and event changes as if unknown consumers exist, because they often do.

Practical signs of contract maturity

- Strong typing or schema validation at boundaries (OpenAPI, JSON Schema, protobuf).

- Explicit versioning strategy, or at least a disciplined compatibility policy.

- Consumer-driven contract tests where appropriate.

- Deprecations that are communicated and measured.

This mindset becomes increasingly important as teams scale. Clear boundaries and enforceable contracts are a core theme in Software Systems 101: Boundaries, Contracts, and Ownership.

Skill 5: Observability, turning production into something you can reason about

“Works on my machine” does not prevent outages. Observability does.

A back end software developer who prevents outages designs telemetry along with features, so debugging is fast and the team can detect problems before customers do.

What “good” looks like

- Structured logs with correlation IDs, minimal noise, and security-safe redaction.

- Metrics that track user-centric outcomes (success rate, latency, saturation) and business flow health.

- Distributed tracing for cross-service debugging.

- Actionable alerts tied to SLOs, not arbitrary host metrics.

Open standards help avoid tool lock-in and improve portability. OpenTelemetry is the current baseline for traces, metrics, and logs in many stacks.

Skill 6: Change safety and release engineering (because most incidents follow deploys)

In practice, the most reliable teams are not the ones who deploy rarely, they are the ones who can deploy safely and recover quickly.

This connects to the industry research behind DORA metrics, which correlate software delivery performance with organizational performance. If you need a starting point, Google’s DORA research is a helpful overview.

The release skills that prevent outages

CI quality gates that are trusted. Tests, static analysis, and security checks that run fast and fail for real reasons.

Progressive delivery. Canary releases, feature flags, and automated rollback when key metrics regress.

Reversible changes. Designing features so you can disable them without redeploying.

If your pipeline and release mechanics are a bottleneck, start with a modern baseline like the one described in CI/CD Technology: Build, Test, Deploy Faster.

Skill 7: Incident fluency, calm execution under pressure

Even great engineering does not eliminate incidents. What separates outage-preventing developers is how they behave during and after an incident.

Incident fluency includes:

- Fast triage using symptoms, dashboards, and recent changes.

- Knowing when to roll back, disable a feature, or shift traffic.

- Clear communication, including what you know, what you do not know, and what you are doing next.

- Post-incident learning that results in specific prevention work, not blame.

This is also where good engineering leadership shows up: investing in runbooks, on-call training, and measurable reliability goals.

A practical “skills to evidence” checklist

If you are hiring, coaching, or evaluating a back end software developer, you want evidence, not self-reported expertise.

| Skill | Evidence to look for | Outage class it reduces |

|---|---|---|

| Failure modeling | Designs include explicit failure cases, latency budgets, and dependency assumptions | Cascades, unknown unknowns |

| Resilience engineering | Correct use of timeouts, retries, idempotency keys, circuit breakers | Dependency incidents |

| Data discipline | Safe migrations, query analysis, constraints, load-safe data access | DB overload, lock incidents |

| Contract thinking | Versioning policy, schema validation, contract tests, deprecation plans | Integration failures |

| Observability | Useful traces, meaningful SLIs, dashboards aligned to user journeys | Slow detection, long MTTR |

| Change safety | Feature flags, canary rollouts, rollbacks, tight PRs | Deploy regressions |

| Incident fluency | Runbooks, postmortems with action items, clear comms | Long incidents, repeat incidents |

If you want to operationalize this at the system level, an architecture review that includes operability and delivery evidence is often the fastest path to clarity. Wolf-Tech outlines what that review should cover in What a Tech Expert Reviews in Your Architecture.

How to assess these skills in interviews (without gimmicks)

The best interviews simulate real work: ambiguous context, trade-offs, and production constraints.

Use scenario prompts that force outage thinking

Ask the candidate to talk through scenarios like:

- “A downstream service is intermittently slow. Our API starts timing out and CPU climbs. What do you check first, and what do you change?”

- “We need to add a required field to a heavily used table with minimal downtime. What migration approach do you use?”

- “We want to refactor a critical flow in a legacy system. How do you reduce risk while shipping?”

Strong candidates will naturally bring up telemetry, rollouts, constraints, and failure modes.

Review a small code sample for the right instincts

You do not need a huge take-home project. A short code review exercise can reveal:

- Whether they add timeouts and input validation by default.

- How they structure error handling.

- Whether they think about idempotency for side-effecting endpoints.

- How they log, and whether they avoid leaking sensitive data.

Growing these outage-preventing skills inside your team

If your team is feature-pressured (most are), you can still improve reliability quickly by focusing on leverage.

A pragmatic 30-day plan:

Week 1: Establish a baseline and pick one critical journey

Choose one user journey that would hurt if it failed, then define a small set of SLIs (success rate, latency, and a dependency health signal). Avoid boiling the ocean.

Week 2: Add change safety on that journey

Implement one progressive delivery mechanism (feature flags or canary) and make rollback a standard move.

Week 3: Fix the biggest failure amplifier

Use tracing and metrics to find the highest-leverage amplifier, often missing timeouts, unbounded concurrency, or hot database queries.

Week 4: Turn lessons into standards

Convert what you learned into defaults (lint rules, templates, runbook snippets, CI checks). This is where reliability becomes repeatable.

If you track code health as part of this effort, focus on a small set of actionable signals. Code Quality Metrics That Matter is a good framework for picking metrics that predict outcomes instead of vanity progress.

Frequently Asked Questions

What is the most important skill for a back end software developer to prevent outages? The most consistently high-leverage skill is change safety, the ability to ship and roll back reliably with good observability. Most incidents follow deployments or configuration changes.

Do backend developers need SRE skills? They do not need to be full-time SREs, but they need SRE-adjacent skills: failure modeling, SLO awareness, telemetry, and incident-ready release practices.

How can I tell if someone is strong at backend reliability in an interview? Give them realistic incident and migration scenarios, then look for structured thinking about timeouts, retries, idempotency, telemetry, rollout strategy, and rollback, not just code correctness.

Why do databases cause so many outages? Because many critical paths depend on the database, and data changes are hard to roll back. Lock contention, slow queries, and unsafe migrations commonly trigger cascading timeouts.

Can a small team prevent outages without heavy process? Yes. Start with one critical journey, add basic observability, enforce timeouts, and use feature flags or canaries. Reliability improves most when safety becomes the default.

Need a backend reliability uplift without slowing delivery?

Wolf-Tech helps teams reduce outage risk through full-stack development, code quality consulting, and legacy optimization, with a focus on practical, provable improvements. If you want a clear plan to harden a critical backend journey (telemetry, change safety, data migration risk, and failure mode controls), start here: contact Wolf-Tech.