Software Applications: When to Go Modular Monolith First

Most teams building software applications do not fail because they picked the “wrong” programming language. They fail because they picked an architecture that multiplied coordination costs, slowed shipping, and made production changes risky.

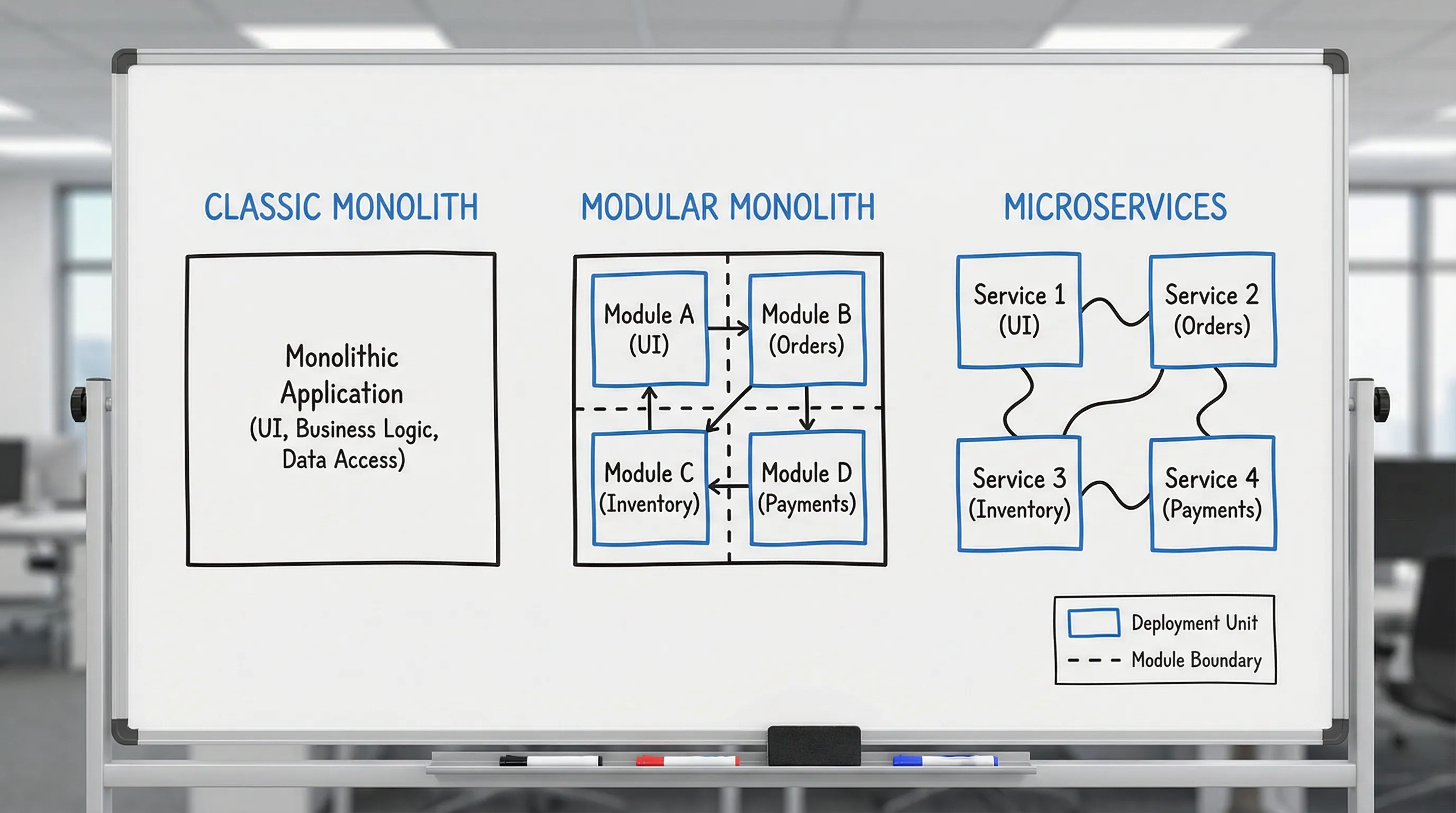

In 2026, microservices are still valuable, but they are also still easy to get wrong. A modular monolith is often the best starting architecture because it gives you many of the benefits people want from microservices (clear boundaries, independent ownership, change safety) without paying the full distributed-systems tax on day one.

What a modular monolith actually is (and what it is not)

A modular monolith is:

- One deployable unit (often one repository, one runtime, one release artifact).

- Many internal modules with explicit boundaries, clear dependencies, and enforced rules.

- A deliberate architecture, not “a monolith that we promise is modular”.

A modular monolith is not:

- A big ball of mud with folders named “modules”.

- Microservices in disguise running in one process.

- A temporary shortcut before “real architecture”.

The key idea is simple: modularity is a code and team property, not a deployment property. Deployment can stay simple while you learn what your actual domain boundaries should be.

Why “modular monolith first” is a strong default for software applications

Starting with microservices is a bet that you already know:

- Stable domain boundaries

- Data ownership boundaries

- Operational maturity (observability, incident response, SLOs)

- Deployment discipline (CI/CD, safe rollbacks, versioning)

- A team structure that can own services end to end

Early-stage products and even many mature internal platforms simply do not have all of that. A modular monolith lets you:

- Ship a thin vertical slice faster because the system has fewer moving parts.

- Avoid early distributed failure modes (timeouts, retries, partial outages, eventual consistency surprises).

- Keep integration costs low (fewer APIs to version, fewer deploy pipelines to coordinate).

- Delay hard decisions safely while still keeping your codebase organized by business capability.

Martin Fowler has argued for a similar default in his “Monolith First” guidance, largely because service boundaries are hard to discover upfront and expensive to change later (MartinFowler.com).

When to go modular monolith first: a decision lens

The decision is not “monolith vs microservices”. It is:

- What constraints do we have today?

- What risks are we trying to reduce?

- What do we need to prove in the next 3 to 12 months?

Here are the highest-signal situations where a modular monolith is usually the right first move for software applications.

High-signal fit indicators

| Situation you are in | What usually goes wrong with early microservices | Why a modular monolith helps |
|---|---|---|
| You are building an MVP or v1 (uncertain requirements) | Teams lock in premature boundaries and then spend months untangling them | You can evolve modules as you learn, without network and deployment complexity |
| One team (or a small number of engineers) owns most of the system | “Service sprawl” creates overhead without real autonomy benefits | Clear internal boundaries still improve maintainability while keeping ops simple |
| You need faster shipping with safer releases (not “infinite scale”) | Distributed dependencies slow lead time and increase change failure rate | Single deployment reduces coordination, but modularity keeps changes localized |
| Your domain is not well understood yet | Boundaries get drawn around org charts or guesses, not domain seams | You can refactor module boundaries based on real change patterns |
| Your production maturity is still growing | Debugging across services becomes slow without strong observability | One process simplifies tracing and incident triage while you build maturity |
| Data boundaries are unclear (shared entities, reporting needs) | Data duplication and consistency problems appear early | You can keep one database initially while designing eventual ownership |

If your core goal is “make delivery reliable and repeatable,” start by putting the right guardrails in place. Architecture is only one layer of that system. (Wolf-Tech covers this broader capability approach in Build Stack: A Simple Blueprint for Modern Product Teams.)

When modular monolith first is not the right choice

A modular monolith is not a universal answer. Consider starting with microservices (or at least planning for earlier extraction) when most of the following are true:

- Multiple independent teams must ship daily to different parts of the product with minimal coordination.

- Hard isolation requirements exist (regulatory separation, strict tenant isolation, separate data residency zones).

- Highly variable scaling profiles exist that you cannot solve with simple horizontal scaling (for example, one subsystem needs GPU workloads or extreme fan-out).

- You already have a mature platform (service templates, golden paths, SLOs, on-call readiness, standardized observability).

If you are unsure, the safest approach is often to validate with a thin vertical slice and measurable non-functional requirements, rather than committing to a long multi-service build. (Wolf-Tech outlines a practical evaluation approach in Apps Technologies: Choosing the Right Stack for Your Use Case.)

The modular monolith blueprint: how to keep it modular in practice

Most “monolith problems” are not monolith problems. They are boundary problems, dependency problems, and testing problems.

A modular monolith works when you treat modules like products with explicit contracts.

1) Design modules around business capabilities

A reliable default is to align modules to business capabilities or bounded contexts (not technical layers like “controllers/services/repositories”). Each module should own:

- Its domain model and rules

- Its application use cases

- Its persistence details (even if it shares a database at first)

- Its public API (internal contract)

A good smell test: if changing one feature forces changes across 8 folders and 15 unrelated classes, your “module” is not a module.

2) Enforce dependency direction (or the architecture will rot)

“Modular” has to be enforceable. Teams usually need at least one of these:

- Compile-time boundaries (language packages, namespaces, module systems)

- Architecture tests (rules that fail CI when module boundaries are violated)

- Code review checklists (useful but insufficient without automation)

Examples of tooling that can help enforce rules include ArchUnit (Java), dependency-cruiser (TypeScript), and Deptrac (PHP). The specific tool matters less than having a rule that CI can enforce.
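
As a rough illustration of what such a CI rule checks, here is a minimal sketch using only Python's standard `ast` module. It is not the API of any tool named above. The module names (`billing`, `customers`), the `ALLOWED` rule table, and the convention that each module exposes a public `api` package are all assumptions made for the example.

```python
import ast

# Hypothetical rule table: each module may import only the public "api"
# package of the modules listed for it. Names are illustrative.
ALLOWED = {
    "billing": {"customers"},
    "customers": set(),
}


def imported_names(source: str) -> list[str]:
    """Return the dotted names of all absolute imports in a source file."""
    names = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.extend(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names.append(node.module)
    return names


def violations(module: str, source: str) -> list[str]:
    """List imports in `source` (owned by `module`) that break the rules."""
    found = []
    for name in imported_names(source):
        parts = name.split(".")
        target = parts[0]
        if target == module or target not in ALLOWED:
            continue  # own code, standard library, or third-party package
        if target not in ALLOWED[module]:
            found.append(f"{module} must not depend on {target}: {name}")
        elif len(parts) < 2 or parts[1] != "api":
            found.append(f"{module} must use {target}.api, not {name}")
    return found
```

A CI job could run `violations` over every file in a module and fail the build when the returned list is non-empty. Dedicated tools cover many more cases (relative imports, dynamic imports, layered rules), so treat this only as a picture of the idea.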

3) Be deliberate about your database strategy

A modular monolith does not require one shared schema forever. But starting with a single database is often pragmatic.

A useful middle ground is:

- One physical database

- Separate schemas (or at least clearly owned tables) per module

- “No cross-module joins” as a goal, with documented exceptions

This keeps reporting feasible early while still pushing you toward real ownership boundaries.
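
One way to make the “no cross-module joins” goal visible is a small check over the SQL each module ships. The sketch below is deliberately naive: it assumes schema-qualified table names (`schema.table`), a hypothetical `SCHEMA_OWNER` map, and a hypothetical `EXCEPTIONS` set for the documented exceptions, and it uses a regular expression where a real check would likely use a proper SQL parser.

```python
import re

# Hypothetical ownership map: schema name -> owning module.
SCHEMA_OWNER = {"billing": "billing", "customers": "customers"}

# Documented exceptions: (querying module, foreign schema) pairs.
EXCEPTIONS = {("reporting", "billing"), ("reporting", "customers")}

# Matches "FROM schema.table" and "JOIN schema.table" (naive on purpose).
TABLE_REF = re.compile(
    r"\b(?:from|join)\s+([a-z_][a-z0-9_]*)\.([a-z_][a-z0-9_]*)",
    re.IGNORECASE,
)


def foreign_schemas(module: str, sql: str) -> set[str]:
    """Schemas used by `sql` that `module` neither owns nor has an exception for."""
    result = set()
    for schema, _table in TABLE_REF.findall(sql):
        schema = schema.lower()
        owner = SCHEMA_OWNER.get(schema)
        if owner is None or owner == module:
            continue  # unknown schema or the module's own data
        if (module, schema) not in EXCEPTIONS:
            result.add(schema)
    return result
```

Keeping the exceptions in one reviewed list is the point of the design: reporting can still join across schemas early on, but every such dependency is written down.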

4) Define module contracts like you would define service contracts

If you expect a module might become a service later, treat its boundaries as if it were remote already:

- Use explicit commands and queries (or use-case interfaces)

- Avoid reaching into other modules’ internals

- Prefer publishing domain events internally (even if it is in-memory at first)

This approach makes extraction much less painful if you later decide microservices are justified.
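
The three points above can be sketched in a few lines. Everything named here (`PayInvoice`, `InvoicePaid`, `BillingApi`, `EventBus`) is hypothetical and exists only to show the shape: callers send an explicit command to a module's public contract, and other modules react to a published event instead of reaching into internals.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class InvoicePaid:
    """Domain event published by the (hypothetical) billing module."""
    invoice_id: str
    amount_cents: int


@dataclass(frozen=True)
class PayInvoice:
    """Command: the explicit request other modules send to billing."""
    invoice_id: str
    amount_cents: int


class EventBus:
    """In-process publish/subscribe; a broker could replace it after extraction."""

    def __init__(self) -> None:
        self._handlers: dict[type, list[Callable]] = defaultdict(list)

    def subscribe(self, event_type: type, handler: Callable) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event: object) -> None:
        for handler in self._handlers[type(event)]:
            handler(event)


class BillingApi:
    """Public contract of the billing module; internal state stays private."""

    def __init__(self, bus: EventBus) -> None:
        self._bus = bus
        self._paid: set[str] = set()

    def handle(self, command: PayInvoice) -> None:
        self._paid.add(command.invoice_id)
        self._bus.publish(InvoicePaid(command.invoice_id, command.amount_cents))


# Usage: another module subscribes without importing billing internals.
bus = EventBus()
received: list[InvoicePaid] = []
bus.subscribe(InvoicePaid, received.append)
BillingApi(bus).handle(PayInvoice("inv-1", 4200))
```

Because commands and events are plain immutable data, they can later be serialized and sent over a network with little change to the calling code.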

5) Make “operability” a first-class module concern

The fastest way to hate your architecture is to ship without operational clarity.

Even in a monolith, you should aim for:

- Structured logs with correlation IDs

- Metrics for latency and error rates on key use cases

- Traces for expensive paths

- A small set of SLOs tied to real user journeys
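
For the first item, a minimal sketch with Python's standard `logging` and `contextvars` modules might look like the following. The field names in the JSON output, the logger name `billing`, and the ID `req-123` are assumptions for illustration, not a prescribed format.

```python
import contextvars
import io
import json
import logging

# Holds the correlation ID for the current request or job.
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            "correlation_id": correlation_id.get(),
        })


def build_logger(name: str, stream) -> logging.Logger:
    """Create a logger that writes JSON lines to `stream`."""
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


# Usage: set the ID once at the edge, then log from any module.
stream = io.StringIO()
log = build_logger("billing", stream)
correlation_id.set("req-123")
log.info("invoice %s paid", "inv-1")
```

In a single process the same ID follows a request through every module for free, which is part of why incident triage tends to be simpler in a monolith.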

If you want a practical, engineering-first reliability baseline, Wolf-Tech’s checklist-oriented approach in Backend Development Best Practices for Reliability maps well to monoliths and services alike.

Modular monolith vs microservices: trade-offs that matter for software applications

Architecture debates often get stuck on scale and ignore delivery reality. Here is a pragmatic comparison focused on what teams actually feel.

| Dimension | Modular monolith | Microservices |
|---|---|---|
| Deployment complexity | Low, one release train | High, many pipelines, coordinated rollouts |
| Local development | Usually straightforward | Often requires mocks, local stacks, service emulators |
| Failure modes | Mostly inside one runtime | Network, partial outages, retries, timeouts, cascading failure |
| Data consistency | Easier to keep strong consistency | Often requires eventual consistency and careful patterns |
| Team autonomy | Possible with good modularity and governance | Stronger autonomy when org and platform maturity exist |
| Observability needs | Moderate | High (distributed tracing becomes mandatory) |
| Cost and overhead | Lower baseline overhead | Higher baseline overhead, can pay off at larger scale |

A key takeaway: microservices can reduce coordination in large orgs, but they increase coordination inside the system. You want to “buy” that trade only when you truly need it.

How to evolve a modular monolith into microservices (without a rewrite)

“Modular monolith first” is not “monolith forever”. It is a sequencing strategy.

The cleanest extraction path has three stages:

Start by proving boundaries inside the codebase

Before you cut the network, prove that modules are real:

- Changes are localized to one module most of the time

- Interfaces are stable and documented

- Dependency rules hold under CI
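
The first check can be approximated from version-control history. The sketch below assumes a `src/<module>/...` directory layout and takes each commit as a list of changed file paths (for example, collected from `git log --name-only`). Both function names are hypothetical.

```python
def module_of(path: str) -> str:
    """Map a file path to its module, assuming a `src/<module>/...` layout."""
    parts = path.split("/")
    return parts[1] if len(parts) > 2 and parts[0] == "src" else "(shared)"


def locality(commits: list[list[str]]) -> float:
    """Fraction of commits whose changed files all belong to one module."""
    if not commits:
        return 0.0
    local = sum(
        1 for files in commits
        if len({module_of(f) for f in files}) == 1
    )
    return local / len(commits)
```

A value that stays high over several months suggests the boundaries match how the system actually changes. A low value points at modules that are coupled in practice, whatever the folder structure says.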

Add integration patterns that survive extraction

Two patterns repeatedly reduce pain:

- Outbox pattern for reliable event publication (prevents “DB write succeeded but event publish failed”).

- Contract testing between modules (and later between services) to prevent breaking changes.

Wolf-Tech covers incremental modernization patterns like strangling and safe rollout mechanics in Modernizing Legacy Systems Without Disrupting Business.

Extract only when there is a measurable reason

“This module is big” is not a safe trigger. A safe trigger is one you can measure:

| Extraction trigger | What to measure | What to extract |
|---|---|---|
| Independent scaling need | CPU, memory, queue depth, p95 latency for a subsystem | A workload-heavy module (for example, media processing) |
| Release bottleneck | Lead time, change failure rate, deployment coordination cost | A module with frequent changes and clear ownership |
| Reliability isolation | Incident blast radius, error budget burn | A module that causes repeated platform-wide incidents |
| Security and compliance boundary | Audit scope, data residency requirements | A module with stricter controls than the rest |

If you cannot articulate the reason in metrics or risk, you are likely paying the distributed tax too early.

Common modular monolith failure modes (and how to prevent them)

“It’s modular in theory, but not in code”

Symptoms: circular dependencies, shared utility dumping grounds, “just this one shortcut” access to internals.

Fix: enforce dependency rules in CI and delete or quarantine shared “commons” packages unless they are truly stable primitives.

“One database table to rule them all”

Symptoms: everything depends on the same core tables, migrations are scary, reporting queries join across the entire domain.

Fix: define data ownership per module and treat cross-module data needs as published views, read models, or events.

“We cannot refactor boundaries because releases are risky”

Symptoms: fear-driven development, long-lived branches, huge PRs, rollback is manual.

Fix: invest in delivery safety (CI quality gates, small changes, feature flags, observability). If you want a concrete checklist for delivery proofs and guardrails, see Custom Software Application Development: End-to-End Guide.

“We picked microservices because we were afraid of the monolith”

Symptoms: many tiny services, unclear boundaries, slow dev environments, high on-call burden.

Fix: treat architecture as an outcome-driven decision. If you are already in this state, consolidating into a modular monolith is sometimes the fastest way to regain delivery speed.

A practical call: choose the architecture that matches your next 12 months

For many software applications, the best architecture is the one that lets you:

- Validate value quickly

- Ship safely and repeatedly

- Keep code changeable as you learn

- Grow into more complexity only when the business demands it

A modular monolith is often the most honest way to do that.

If you want a second opinion before committing (or you need help turning a messy monolith into a truly modular one), Wolf-Tech provides architecture reviews, legacy code optimization, and full-stack delivery support. A good starting point is an evidence-based review of boundaries, delivery safety, and operability, as outlined in What a Tech Expert Reviews in Your Architecture.