Custom Software Services: What You Should Get by Default

Most “failed” custom software projects don’t fail because the team can’t code. They fail because basic expectations were never made explicit: what “done” means, what security baseline applies, who owns the environments, what gets documented, and how quality is enforced.

If you’re buying or leading custom software services, you should not have to negotiate these fundamentals each time. A serious provider should bring them by default.

This guide lays out what that default should include, so you can align stakeholders, compare vendors fairly, and protect your timeline and budget.

What “custom software services” really includes (beyond writing code)

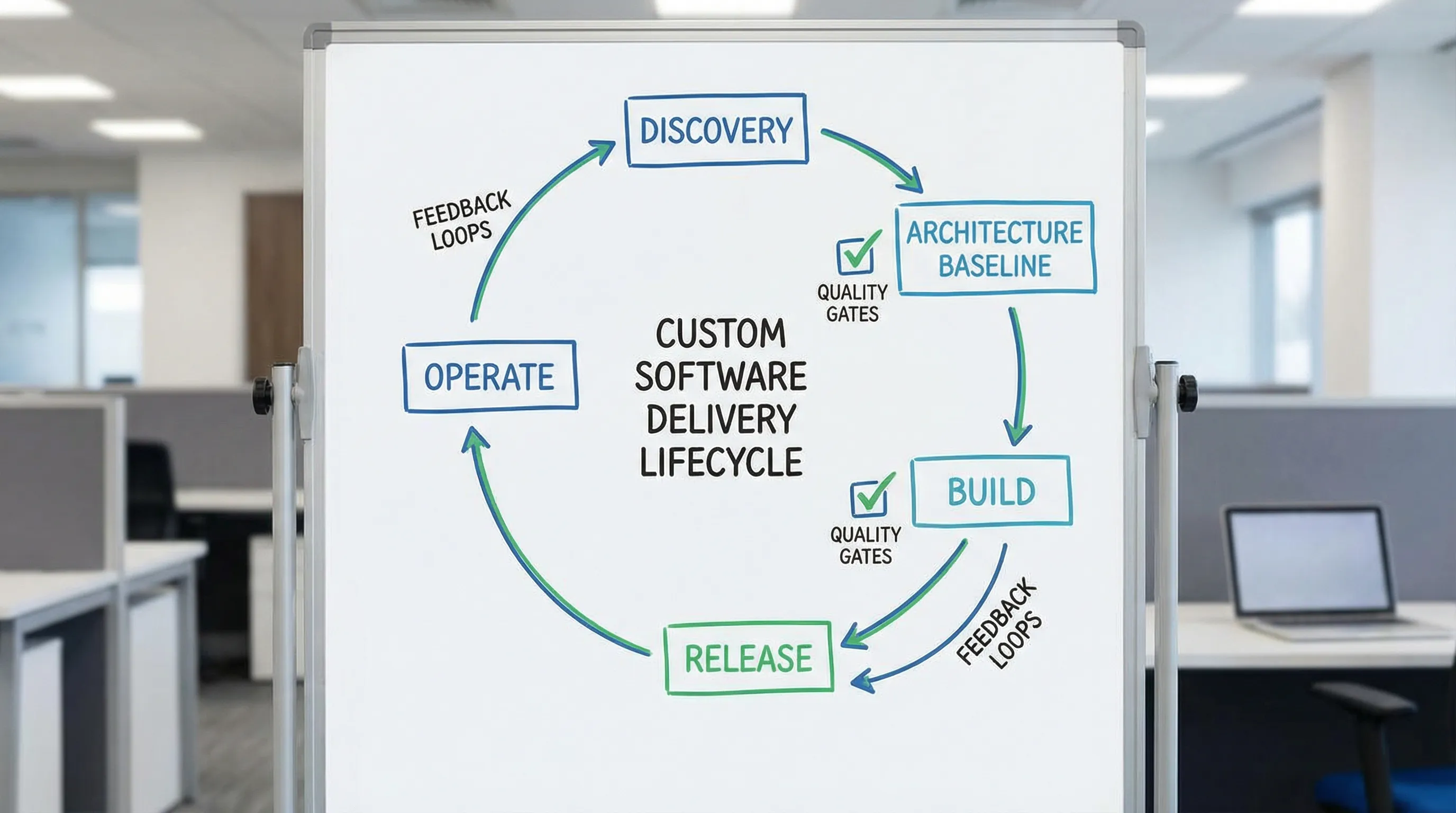

Custom software services usually span more than feature delivery. In practice, you are buying a capability to discover, build, ship, and operate software safely.

A good way to sanity check scope is to map your engagement to the primary job to be done:

| Engagement type | Primary job to be done | What success looks like |
|---|---|---|
| New build (MVP or v1) | Prove value fast, without painting yourself into a corner | A thin vertical slice in production, measurable outcomes, safe iteration |
| Modernization | Reduce risk and change friction in a live system | Incremental improvements with minimal disruption, reliability preserved |
| Extension / scale | Add capabilities while protecting performance and delivery speed | Stable architecture boundaries, predictable releases, operability improved |
| Rescue / stabilization | Stop incidents and regain delivery control | Visibility, tests, CI/CD, prioritized fixes, reduced change failure rate |

Regardless of which bucket you’re in, the “by default” items below should show up.

The baseline deliverables you should get by default

These are the items that prevent the most expensive category of surprises: late rework, security gaps, “it works on my machine,” and knowledge trapped in a few heads.

1) Outcome and scope clarity (a short discovery package)

Even if you arrive with requirements, you still need a shared definition of success.

By default, expect:

- A concise outcome brief (business goal, users, workflows, constraints)

- A prioritized scope with explicit non-goals

- Key non-functional requirements (performance, reliability, security, compliance, data retention)

- A risk list (unknowns, assumptions, integration constraints) and how the team will de-risk them

If a vendor skips this and jumps straight to estimates, the estimate you receive is likely a guess.

2) Architecture baseline and decision records

You do not need a “perfect” architecture upfront. You do need a coherent baseline that supports incremental delivery.

By default, expect:

- A system context diagram (boundaries, integrations, identity, data stores)

- A first-pass data model (entities, lifecycle, ownership, audit requirements)

- A deployment view (environments, regions, critical dependencies)

- Architecture Decision Records (ADRs) for decisions that are hard to reverse

This is also where teams should explicitly choose an approach that matches risk. Many products benefit from starting simpler (often a modular monolith) and evolving once real constraints become clear.

3) A delivery system (CI/CD) you can inspect

Custom software services that cannot reliably ship are not “services”; they are a prototype factory.

By default, you should get:

- A source-controlled repo you can access (with clear ownership)

- Automated builds and tests on every change

- Repeatable deployments (at least to staging, ideally production with guardrails)

- A release strategy that reduces blast radius (feature flags, canary/blue-green where appropriate)

If you want a deeper primer on what “good CI/CD” entails, Wolf-Tech has a dedicated guide on CI/CD technology.
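To make the "reduces blast radius" idea concrete, here is a minimal sketch of a percentage-based feature flag in Python. It is an illustration under assumptions, not a description of any particular flag product: the function names, the flag name, and the hashing scheme are all hypothetical.

```python
import hashlib


def rollout_bucket(flag_name: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag_name: str, user_id: str, rollout_percent: int) -> bool:
    """Return True if the user falls inside the rollout percentage.

    The same user always lands in the same bucket for a given flag, so
    raising rollout_percent only adds users and never removes existing ones.
    """
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    return rollout_bucket(flag_name, user_id) < rollout_percent


# Hypothetical usage: expose a new checkout flow to about 10% of users first.
if is_enabled("new-checkout", "user-123", rollout_percent=10):
    pass  # serve the new code path
```

Setting the percentage to 0 turns the feature off for everyone without a redeploy, which is the property that makes flags useful as a release guardrail.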

4) Quality gates that are enforced, not hoped for

A professional team should treat change safety as a default requirement.

By default, expect:

- Code review standards (PR size limits, required reviewers, review checklist)

- Automated linting/formatting

- Testing strategy (unit, integration, contract tests where needed)

- A definition of done that includes reliability and security basics

This is not about chasing vanity metrics. It is about preventing predictable regressions. If you want a pragmatic view on what to measure, see Wolf-Tech’s article on code quality metrics that matter.
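As one example of a gate that is enforced rather than hoped for, the sketch below checks a pull request against a size limit. The 400-line threshold, the ignored file suffixes, and the `FileChange` type are assumptions chosen for illustration; a real team would pick its own values and feed the function from its CI system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileChange:
    """One changed file in a pull request (hypothetical shape)."""
    path: str
    added: int
    deleted: int


def check_pr_size(
    changes: list[FileChange],
    max_changed_lines: int = 400,  # assumed limit, not a standard
    ignored_suffixes: tuple[str, ...] = (".lock", ".snap"),
) -> tuple[bool, int]:
    """Return (passed, counted_lines) for a pull request.

    Generated files such as lockfiles are skipped so they do not
    trip the limit on their own.
    """
    counted = sum(
        change.added + change.deleted
        for change in changes
        if not change.path.endswith(ignored_suffixes)
    )
    return counted <= max_changed_lines, counted
```

A CI job could call this and fail the build when the first element of the result is `False`, which turns a review guideline into a visible, inspectable rule.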

5) Security baseline (from day one)

Security cannot be a “phase at the end.” The minimum baseline should be explicit and auditable.

By default, expect alignment to widely accepted practices such as:

- OWASP guidance for web risks (start with the OWASP Top 10)

- Secure development lifecycle controls (for example, NIST SSDF)

Practical defaults include:

- Authentication and authorization approach documented (including roles/permissions)

- Secrets management (no secrets in code or CI logs)

- Dependency vulnerability scanning and patch policy

- Basic threat modeling for high-risk workflows (payments, PII, admin actions)
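The "no secrets in code or CI logs" default can be sketched as follows: secrets are read from the environment at startup, and a missing one stops the process with an error that names the variable but never prints a value. The variable names and the `MissingSecretError` type are hypothetical, and a real setup would typically sit behind a dedicated secrets manager.

```python
import os
from typing import Mapping, Optional


class MissingSecretError(RuntimeError):
    """Raised at startup when a required secret is not configured."""


def load_secrets(
    required: list[str],
    env: Optional[Mapping[str, str]] = None,
) -> dict[str, str]:
    """Read required secrets from the environment and fail fast if any are missing."""
    source = os.environ if env is None else env
    missing = [name for name in required if not source.get(name)]
    if missing:
        # Report only the variable names, never the values.
        raise MissingSecretError("missing secrets: " + ", ".join(sorted(missing)))
    return {name: source[name] for name in required}


# Hypothetical usage at application startup:
# secrets = load_secrets(["DATABASE_URL", "PAYMENT_API_KEY"])
```

Failing at startup is a deliberate choice here: a misconfigured deployment surfaces immediately in staging instead of at the first request that needs the secret.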

6) Observability and operational readiness

If you cannot see what the system is doing, you cannot operate it.

By default, expect:

- Structured logging and correlation IDs

- Metrics for key flows (errors, latency, throughput)

- Alerting for user-impacting failures

- Runbooks for common incidents
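For a sense of what "structured logging and correlation IDs" means in practice, here is a minimal sketch using only the Python standard library. The field names, the `handle_request` function, and the choice of a UUID per request are assumptions for illustration; production systems often take the ID from an incoming request header instead.

```python
import contextvars
import json
import logging
import uuid

# Holds the correlation ID for the current request or job.
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            "correlation_id": correlation_id.get(),
        }
        return json.dumps(payload)


def build_logger(name: str = "app") -> logging.Logger:
    """Create a logger that writes JSON lines to the default stream."""
    logger = logging.getLogger(name)
    if not logger.handlers:
        handler = logging.StreamHandler()
        handler.setFormatter(JsonFormatter())
        logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


def handle_request(logger: logging.Logger, order_id: str) -> str:
    """Hypothetical handler: sets an ID, then every log line carries it."""
    request_id = str(uuid.uuid4())
    token = correlation_id.set(request_id)
    try:
        logger.info("order received: %s", order_id)
        logger.info("order processed: %s", order_id)
    finally:
        correlation_id.reset(token)
    return request_id
```

Because both log lines share one `correlation_id`, an operator can filter on that value and see everything that happened for a single request, even when many requests are interleaved.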

A strong provider will also help you define “what good looks like” using service-level thinking (SLIs/SLOs). Google’s SRE resources are a solid reference point.
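The arithmetic behind an SLO is simple enough to sketch. The functions below compute an error budget from an availability target; the function names and the 30-day window are assumptions for illustration, and the targets you choose should come from your own users' needs.

```python
def error_budget_minutes(slo_target: float, window_days: int = 30) -> float:
    """Allowed downtime, in minutes, for an availability SLO over a window."""
    if not 0 < slo_target < 1:
        raise ValueError("slo_target must be a fraction between 0 and 1, e.g. 0.999")
    total_minutes = window_days * 24 * 60
    return total_minutes * (1 - slo_target)


def budget_remaining(slo_target: float, total_requests: int, failed_requests: int) -> float:
    """Fraction of the error budget still unspent for a request-based SLI.

    A negative result means the budget has been overspent.
    """
    allowed_failures = total_requests * (1 - slo_target)
    if allowed_failures == 0:
        return 0.0
    return 1 - failed_requests / allowed_failures


# A 99.9% target over 30 days allows roughly 43.2 minutes of downtime.
print(round(error_budget_minutes(0.999), 1))
```

Framing reliability as a budget gives both sides a shared number to discuss: when most of it is spent, stability work takes priority over new features.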

7) Documentation and knowledge transfer

Documentation is not a vanity deliverable. It is an exit strategy and a scaling tool.

By default, expect lightweight, maintained docs such as:

- How to run locally, test, and deploy

- System overview and key data flows

- Environment setup and access notes

- Operational notes (backup/restore, key jobs, scheduled tasks)

This should be paired with a real handover process, not a single meeting at the end.

The “default” quality gates that protect delivery speed

You should be able to ask, “How do you prevent regressions as the codebase grows?” and get a concrete answer.

Here’s a practical mapping of common gates to the risk they reduce:

| Default gate | What it prevents | What to ask for as proof |
|---|---|---|
| CI on every change | Broken main branch, surprise integration issues | Recent pipeline runs, failure rate, typical duration |
| Automated tests (right-sized) | Regressions, fear-driven development | Test scope, examples, flaky test handling |
| PR review checklist | Security and reliability mistakes, inconsistent patterns | Review template, sample PRs |
| Static analysis (lint/type checks) | “Small” bugs that compound over time | Configs and ruleset choices |
| Dependency scanning | Shipping known vulnerable packages | Tooling used and patch workflow |
| Release guardrails (flags/canary) | High-blast-radius incidents | How rollbacks are handled in practice |

If a provider frames these as “optional extras,” treat that as a signal that you will pay for them later, usually during an incident.

What you should get by default for production readiness

A useful rule: if the vendor cannot describe how they make software safe to run, they are not done.

Production readiness does not mean “enterprise heaviness.” It means basic operability.

By default, expect the team to cover:

- Environments: dev/staging/prod boundaries, configuration strategy

- Data: migrations, backups, recovery expectations (RPO/RTO if relevant)

- Performance: at least a baseline load and latency target for critical flows

- Reliability: timeouts, retries, idempotency where needed

- Security: least privilege access and audit trails for sensitive actions

Wolf-Tech often frames this as shipping a “thin vertical slice” that is production-grade early, a concept also emphasized in their software building process guide.
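The reliability item in the list above (timeouts, retries, idempotency) is easier to evaluate with a concrete shape in mind. The sketch below retries a transient failure with exponential backoff and sends the same idempotency key on every attempt. `TransientError`, the default delays, and the `operation` callback are hypothetical, and the callback is assumed to enforce its own timeout.

```python
import time
import uuid
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical error type for failures that are safe to retry."""


def call_with_retries(
    operation: Callable[[str], T],
    max_attempts: int = 3,
    base_delay: float = 0.2,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call operation(idempotency_key), retrying transient failures with backoff.

    The same idempotency key is sent on every attempt so the receiving
    service can recognize a repeat and avoid applying the change twice.
    """
    idempotency_key = str(uuid.uuid4())
    for attempt in range(1, max_attempts + 1):
        try:
            return operation(idempotency_key)
        except TransientError:
            if attempt == max_attempts:
                raise
            # Exponential backoff: base_delay, then 2x, 4x, ...
            sleep(base_delay * 2 ** (attempt - 1))
    raise ValueError("max_attempts must be at least 1")
```

Retries without an idempotency key can double-charge a customer or duplicate an order, which is why the two items belong together in a readiness review.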

Commercial and governance defaults (so you do not get trapped)

This is not legal advice, but these are practical expectations that reduce friction and risk.

Clear delivery shape

By default, you should be able to see:

- What will be delivered first (and why)

- How change requests are handled

- How progress is measured (working software, not slide decks)

Many teams use lightweight, outcome-based milestones with demoable increments. If you prefer a checklist-based approach for early delivery, Wolf-Tech has an MVP checklist.

Ownership and access

By default, you should have clarity on:

- Code and artifact ownership

- Access to repos, CI/CD, and cloud accounts (or a documented shared model)

- What happens at the end of the engagement (handover, continuation, or transition)

A strong provider is comfortable with an explicit exit plan.

Reporting that helps decisions

Status updates should not be “green by default.” They should surface:

- What shipped

- What is blocked

- What risks increased or decreased

- What decisions you need to make

When “custom software services” are missing the basics (common red flags)

You do not need perfection, but you do need seriousness.

Watch for:

- Estimates provided without clarifying non-functional requirements

- No mention of CI/CD, tests, or release safety until late in the project

- “We’ll secure it at the end” or vague security assurances without a baseline

- Reluctance to show recent work artifacts (sanitized PRs, pipeline runs, runbooks)

- Documentation treated as “nice to have” rather than part of done

If you want a more formal evaluation approach, Wolf-Tech also publishes a detailed guide on how to vet custom software development companies.

A simple one-page “defaults” checklist you can copy into your next kickoff

If you want to operationalize this article, paste the following headings into a doc and fill them in during discovery:

- Outcomes (measurable)

- Scope (in) and non-goals (out)

- Non-functional requirements (performance, reliability, security, compliance)

- Architecture baseline (boundaries, data, integrations)

- Delivery system (CI/CD, environments, release strategy)

- Quality gates (tests, reviews, static checks)

- Security baseline (standards, threat model notes, scanning)

- Observability (logs, metrics, tracing, alerts)

- Operational readiness (backups, migrations, runbooks)

- Ownership and access (code, infra, credentials)

- Handover plan (docs, pairing, final review)

This turns “what you should get by default” into a shared agreement that prevents late surprises.

Frequently Asked Questions

What are custom software services, exactly?

Custom software services include discovery, design, engineering, delivery (CI/CD), and operational readiness, not just writing code. The goal is reliable software you can evolve.

Should security really be included by default?

Yes. A minimum security baseline (secure auth, secrets handling, dependency scanning, and alignment with common guidance like OWASP) should be part of normal delivery.

Do I need CI/CD for a small custom project?

You may not need complex infrastructure, but you do need repeatable builds, automated checks, and a reliable way to deploy. Otherwise, every release becomes a risky manual event.

How do I compare two vendors using these defaults?

Ask each vendor to show evidence: example ADRs, a sample pipeline run, a definition of done, testing approach, and how they handle releases and rollbacks. Favor proof over promises.

What if I already have an internal team and just need help?

You can still require these defaults. In that case, “custom software services” may look like code quality consulting, modernization, delivery system improvements, or architecture reviews that raise your baseline.

Need a second opinion on what your project should include by default?

Wolf-Tech provides full-stack development services, code quality consulting, and legacy code optimization, with experience across modern stacks, cloud/DevOps, and data/API engineering.

If you want help turning the checklist above into a concrete delivery plan, or validating whether a proposed scope is missing critical defaults, you can learn more at Wolf-Tech or start by exploring their practical guide to custom software development cost, timeline, and ROI.