Software Technology Stack: A Practical Selection Scorecard

Picking a software technology stack is one of those decisions that feels reversible until it isn’t. Once a product has real users, real data, and real uptime expectations, the stack becomes intertwined with hiring, delivery speed, security posture, cloud costs, and even how your teams collaborate.

The problem is that most “stack decisions” are made with the wrong inputs: popularity, personal preference, or a half-read benchmark. A better approach is to treat the stack as an operating choice and evaluate it with the same discipline you would apply to a vendor contract or an architecture review.

This article gives you a practical software technology stack selection scorecard you can reuse across projects. It’s designed for CTOs, tech leads, and product leaders who want a defensible decision, plus a clear plan to validate it quickly.

What a selection scorecard actually does (and what it doesn’t)

A stack scorecard is a structured way to compare options against your constraints. It helps you:

- Make trade-offs explicit (speed vs compliance, simplicity vs flexibility, etc.)

- Replace opinions with measurable checks and proof artifacts

- Document why you chose a stack, so the decision survives team changes

A scorecard does not promise a universally “best” stack. It’s a decision tool that makes sure you pick something you can ship, secure, and operate for the next 12 to 36 months.

If you want deeper guidance on stack choice beyond the scorecard, Wolf-Tech has related decision guides you can cross-reference, like How to Choose the Right Tech Stack in 2025 and Web Development Technologies: What Matters in 2026.

Step 1: Create a one-page “stack context pack”

Before you score anything, write down the minimum context that changes the decision. Keep it to one page so it stays usable.

Include:

- Product shape: public marketing site, B2B dashboard, mobile-first, API platform, internal tooling, real-time collaboration, etc.

- Hard constraints: data residency, specific cloud, on-prem requirements, “must integrate with X”, browser support, offline needs

- Non-functional requirements (NFRs): latency targets, throughput, availability, RPO/RTO, security/compliance requirements

- Team reality: current skills, hiring market constraints, on-call maturity, tolerance for operational complexity

- Time horizon: an MVP in 8 weeks and a platform you’ll run for 5 years are different games

If you are not used to writing NFRs in measurable terms, it’s worth aligning on reliability and delivery metrics early. The DORA research connects strong delivery performance to organizational outcomes, and it has become a common baseline language for leadership teams (see DORA’s State of DevOps resources).
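One way to keep NFRs measurable is to record each one as a target with a unit and a direction, then compare it against what you actually measured. The sketch below is illustrative only: the `Nfr` class, the `unmet` helper, and every target value are hypothetical placeholders, not figures from this article or from DORA.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Nfr:
    """One measurable non-functional requirement (hypothetical structure)."""
    name: str
    target: float
    unit: str
    higher_is_better: bool = False

    def is_met(self, measured: float) -> bool:
        # Availability-style targets must be reached or exceeded;
        # latency-style targets must not be exceeded.
        if self.higher_is_better:
            return measured >= self.target
        return measured <= self.target


# Placeholder targets; replace with the numbers from your own context pack.
NFRS = [
    Nfr("p95 API latency", 300, "ms"),
    Nfr("monthly availability", 99.9, "%", higher_is_better=True),
    Nfr("RPO", 15, "minutes"),
    Nfr("RTO", 60, "minutes"),
]


def unmet(nfrs, measurements):
    """Return names of NFRs that were not measured or missed their target."""
    return [
        n.name
        for n in nfrs
        if n.name not in measurements or not n.is_met(measurements[n.name])
    ]
```

Treating an unmeasured NFR as unmet is a deliberate choice in this sketch: a target nobody has measured gives you no evidence either way.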

Step 2: Define your scoring scale (keep it boring)

Use a 1 to 5 scale and define it once so the team scores consistently.

| Score | Meaning | How it should feel in a discussion |
|---|---|---|
| 1 | High risk / mismatch | “We can maybe make it work, but it will hurt.” |
| 2 | Weak fit | “Possible, but we’d be fighting the stack.” |
| 3 | Acceptable | “Works, known trade-offs, manageable.” |
| 4 | Strong fit | “Fits our constraints and execution model.” |
| 5 | Ideal | “Best match with clear proof and low downside.” |

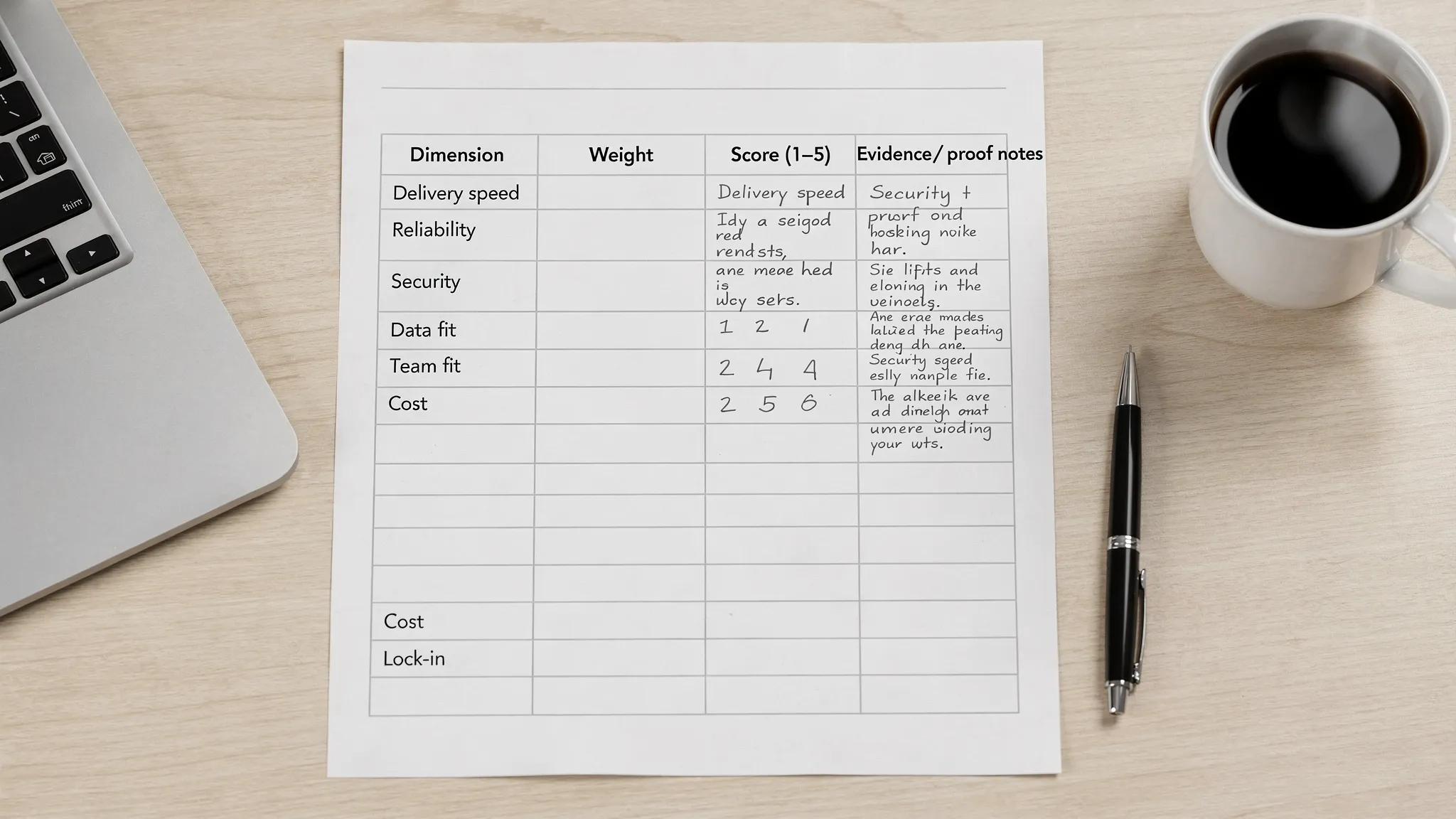

Step 3: Use the practical stack selection scorecard

The scorecard below focuses on what actually drives outcomes in production: change safety, operability, security, data/integration fit, and long-term maintainability.

The scorecard (dimensions, what to check, proof)

Use the “Typical proof” column as your anti-handwaving mechanism. If you cannot produce the proof, you are guessing.

| Dimension | What you are really evaluating | Typical proof (high signal) | Suggested weight |
|---|---|---|---|
| Delivery speed and change safety | How quickly you can ship without breaking production | CI pipeline baseline, test strategy, PR review flow, deploy frequency targets | High |
| Operability and reliability | Can you run it predictably, diagnose issues fast, and recover | Logs/metrics/traces plan, SLOs, runbooks, on-call expectations | High |
| Security and compliance fit | How well the stack supports secure defaults and required controls | Threat model outline, authn/authz approach, dependency scanning plan, audit evidence expectations | High in regulated contexts |
| Data and integration fit | Whether the stack matches your data shape and integration surface | Data model sketch, migration approach, API contracts, event strategy | High |
| Performance and UX constraints | Can you hit user-perceived performance targets realistically | Performance budget, caching strategy, load test plan, Core Web Vitals plan (web) | Medium to high |
| Team fit and hiring | Can your team build and maintain it without heroics | Skills inventory, hiring plan, training cost estimate | Medium |
| Ecosystem maturity | Libraries, tooling, community, and upgrade path clarity | LTS policies, deprecation strategy, upgrade cadence evidence | Medium |
| Cost and infrastructure constraints | Cloud costs, licensing, operational staffing, runtime efficiency | Rough cost model, hosting constraints, FinOps ownership | Medium |
| Optionality and lock-in risk | How reversible the decision is, and what “escape hatches” exist | Boundary decisions (APIs, data portability), ADRs, modular architecture seams | Medium |

Two notes:

- “Suggested weight” is intentionally qualitative. If you want a strict weighted model, assign percentages, but only after leadership aligns on what matters most.

- Don’t overweight “ecosystem maturity” if it’s just another way to say “popular.” Mature ecosystems have stable upgrade paths, boring tooling, and predictable operations.
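If you do move to a strict weighted model, the arithmetic is a weighted average of the 1 to 5 scores. The following is a minimal sketch under stated assumptions: the numeric mapping (high = 3, medium = 2, low = 1), the short dimension keys, and the example scores are all hypothetical, and "Medium to high" and "High in regulated contexts" are simplified to a single level.

```python
# Assumed numeric mapping for the qualitative weights; agree on your own.
WEIGHTS = {"high": 3, "medium": 2, "low": 1}

# Hypothetical short keys for the nine scorecard dimensions.
DIMENSIONS = {
    "delivery": "high",
    "operability": "high",
    "security": "high",          # assumes a regulated context
    "data_integration": "high",
    "performance": "medium",     # "Medium to high" simplified to medium
    "team_fit": "medium",
    "ecosystem": "medium",
    "cost": "medium",
    "optionality": "medium",
}


def weighted_score(scores: dict[str, int]) -> float:
    """Return the weighted average of 1-5 scores across all dimensions."""
    missing = DIMENSIONS.keys() - scores.keys()
    if missing:
        raise ValueError(f"unscored dimensions: {sorted(missing)}")
    for dim in DIMENSIONS:
        if not 1 <= scores[dim] <= 5:
            raise ValueError(f"{dim}: score must be between 1 and 5")
    total_weight = sum(WEIGHTS[level] for level in DIMENSIONS.values())
    weighted_sum = sum(WEIGHTS[DIMENSIONS[d]] * scores[d] for d in DIMENSIONS)
    return round(weighted_sum / total_weight, 2)


# Example scores for an imaginary "boring default" candidate.
boring_default = {
    "delivery": 4, "operability": 4, "security": 4, "data_integration": 4,
    "performance": 3, "team_fit": 4, "ecosystem": 4, "cost": 4,
    "optionality": 3,
}
print(weighted_score(boring_default))  # 3.82
```

Rejecting an incomplete score sheet keeps a candidate from looking better simply because a weak dimension was left blank.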

Step 4: Score only 2 to 4 candidate stacks

If you score 10 options, you’re not deciding; you’re collecting trivia.

A practical candidate set often looks like:

- A “boring default” your team can deliver with today

- A “strategic bet” that improves a key constraint (performance, compliance, hiring, etc.)

- A “constraint-driven” option required by an integration, platform, or enterprise standard

If you are still early, it can help to decide the architectural baseline first (for many teams, a modular monolith is a strong default). Wolf-Tech covers this trade-off in Software Applications: When to Go Modular Monolith First.

Step 5: Add “proof gates” to prevent feel-good scoring

A common failure mode is scoring based on confidence rather than evidence. Fix that by adding proof gates.
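One possible way to enforce this in the score sheet itself is to cap any score that has no proof artifact attached. This is a sketch of one convention, not a rule from the scorecard: the cap of 3, the `DimensionScore` class, and the artifact names are assumptions made for illustration.

```python
from dataclasses import dataclass, field

# Assumed convention: without proof, a dimension cannot score above "Acceptable".
UNPROVEN_CAP = 3


@dataclass
class DimensionScore:
    dimension: str
    score: int
    proof: list[str] = field(default_factory=list)  # links or file paths


def apply_proof_gate(entries):
    """Return final scores, capping any entry that has no proof attached."""
    return {
        e.dimension: e.score if e.proof else min(e.score, UNPROVEN_CAP)
        for e in entries
    }


# Hypothetical entries: one backed by an artifact, two scored on confidence alone.
entries = [
    DimensionScore("delivery", 5, proof=["ci-pipeline-run.txt"]),
    DimensionScore("operability", 5),
    DimensionScore("cost", 2),
]
print(apply_proof_gate(entries))
# {'delivery': 5, 'operability': 3, 'cost': 2}
```

The cap only lowers scores; a low score without proof stays as it is, since pessimism does not need the same scrutiny as optimism.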

Proof gate A: Thin vertical slice

Run a short build that forces end-to-end reality. This is not a prototype UI and not a hello-world API. It’s a small but production-shaped slice.

Your thin slice should include:

- One real user journey (auth included)

- One real piece of data (create, read, update)

- One integration seam (even if stubbed, define the contract)

- CI that builds, tests, and deploys

- Observability hooks (structured logs, basic metrics, error tracking)

Wolf-Tech often recommends thin slices as a de-risking tool because they expose hidden constraints fast (deployment friction, auth complexity, data modeling issues, performance bottlenecks). If you want a structured playbook, see Custom Application Development: From Discovery to Launch.
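For the observability item in the slice, structured logs can be as small as a JSON formatter on the standard library logger. The sketch below uses only Python's `logging` and `json` modules; the `context` key passed through `extra`, the field names, and the logger name are assumptions chosen for illustration, and a real slice may well use a logging library instead.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record):
        payload = {
            "ts": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # "context" is our own convention, supplied via logging's `extra`.
        payload.update(getattr(record, "context", {}))
        if record.exc_info:
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload)


def build_logger(name, stream=None):
    """Create a logger that writes JSON lines to stream (stderr by default)."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.propagate = False  # avoid duplicate output via the root logger
    return logger


log = build_logger("thin-slice")
log.info("order created", extra={"context": {"order_id": "o-123"}})
```

Emitting one JSON object per line means the same output can be searched locally and shipped to whatever log backend you choose later.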

Proof gate B: Operational readiness mini-check

Before you bless a stack, answer these operational questions in writing:

- How do we deploy safely (rollback, canary, feature flags)?

- What breaks first under load, and how do we know?

- How do we rotate secrets and manage environments?

- What is the minimum observability standard?

If your team wants a deeper reliability checklist by layer, Wolf-Tech’s Backend Development Best Practices for Reliability is a good companion.

Proof gate C: Security baseline

You do not need a full compliance program to evaluate stack security, but you do need a baseline drawn from widely referenced security guidance.

Your stack should make it easy to adopt secure defaults, not require heroic discipline to avoid common footguns.

Step 6: Turn the scorecard into a decision record (ADR)

Once you score and validate, write a short Architecture Decision Record so the decision is explainable later.

A lightweight ADR template:

- Decision: What stack you chose

- Context: The 1-page context pack (or link)

- Options considered: 2 to 4 candidates

- Score summary: Table with dimension scores and the top 3 reasons

- Key trade-offs accepted: What you are consciously giving up

- Proof artifacts: Thin slice repo, pipeline, performance baseline, security notes

- Revisit triggers: What must become true to reconsider (traffic growth, compliance change, hiring constraints, cost thresholds)

This is how you avoid “stack amnesia,” where a team inherits a set of tools with no rationale and can’t tell what is accidental versus intentional.
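If you keep ADRs next to the code, the template above can be filled from structured data so that every record has the same fields. This is a minimal sketch: the `Adr` class, its field names, the plain-text output layout, and the example values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Adr:
    """Fields mirror the lightweight template; names are illustrative."""
    decision: str
    context: str
    options: list[str]
    score_summary: dict[str, float]
    top_reasons: list[str]
    trade_offs: list[str]
    proof_artifacts: list[str]
    revisit_triggers: list[str]

    def render(self) -> str:
        if not 2 <= len(self.options) <= 4:
            raise ValueError("an ADR should compare 2 to 4 candidates")
        # List candidates from highest to lowest score.
        ranked = sorted(
            self.score_summary.items(), key=lambda kv: kv[1], reverse=True
        )
        lines = [
            f"Decision: {self.decision}",
            f"Context: {self.context}",
            "Options considered: " + ", ".join(self.options),
            "Score summary: "
            + ", ".join(f"{name} {score:.2f}" for name, score in ranked),
        ]
        for title, items in (
            ("Top reasons", self.top_reasons),
            ("Key trade-offs accepted", self.trade_offs),
            ("Proof artifacts", self.proof_artifacts),
            ("Revisit triggers", self.revisit_triggers),
        ):
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


adr = Adr(
    decision="Stack A",
    context="docs/context-pack.md",
    options=["Stack A", "Stack B"],
    score_summary={"Stack B": 3.4, "Stack A": 3.82},
    top_reasons=["Team can deliver with it today"],
    trade_offs=["Less flexibility for real-time features"],
    proof_artifacts=["thin-slice repo", "performance baseline"],
    revisit_triggers=["Compliance scope changes"],
)
print(adr.render())
```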

Common scoring traps (and how to avoid them)

Trap 1: Optimizing for developer happiness while ignoring on-call reality

A stack can feel productive in week 2 and still be expensive in month 9. If incident response and debugging are weak, delivery slows because every change becomes risky.

Countermeasure: require an operability plan and a minimum observability baseline, even for MVPs.

Trap 2: Treating microservices or serverless as “modern by default”

Modern is not an architecture style. It’s the ability to ship safely and operate reliably.

Countermeasure: score operational complexity explicitly. If the team doesn’t have maturity for distributed systems, reflect that in the score.

Trap 3: Confusing benchmarks with user-perceived performance

Most performance problems in real systems come from I/O and data access patterns, not language speed.

Countermeasure: require a performance budget and measure your thin slice. Wolf-Tech’s Optimize the Code: High-Impact Fixes Beyond Micro-Optimizing is a useful mindset shift here.
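A performance budget becomes checkable once you compare a percentile of measured latencies against it. The sketch below assumes the budget is expressed as a p95 in milliseconds; the helper names, the run count, and the use of wall-clock timing around a single callable are illustrative choices, and a real slice would normally be measured with a load-testing tool against a deployed environment.

```python
import statistics
import time


def measure_ms(fn, runs=50):
    """Call fn repeatedly and return wall-clock durations in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000)
    return samples


def p95(samples_ms):
    """95th percentile: the last of the 19 cut points that split the data into 20 groups."""
    return statistics.quantiles(samples_ms, n=20, method="inclusive")[18]


def within_budget(samples_ms, budget_ms):
    """True when the measured p95 does not exceed the budget."""
    return p95(samples_ms) <= budget_ms


# Hypothetical usage: time a stand-in for one request through the slice.
samples = measure_ms(lambda: sum(range(10_000)), runs=20)
print(f"p95 = {p95(samples):.3f} ms, within 50 ms budget: {within_budget(samples, 50)}")
```

Using a percentile rather than the mean keeps a handful of slow requests visible, which is usually what users notice.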

Trap 4: “We’ll fix security later”

Security debt is costly because it becomes architectural. Authentication boundaries, tenancy, audit logging, and secrets management are not bolt-ons.

Countermeasure: score security/compliance fit high whenever you handle sensitive data, and require baseline controls in the slice.

A practical 12-day cadence for using this scorecard

If you need an execution-friendly cadence, this is a realistic flow:

- Day 1: Create the context pack, align on weights, pick 2 to 4 candidates

- Days 2 to 3: Score candidates with evidence you already have, identify “unknowns”

- Days 4 to 10: Build a thin vertical slice for the top 1 to 2 candidates

- Days 11 to 12: Re-score with proof, write the ADR, decide

This is fast enough for product timelines and rigorous enough to stand up to stakeholder scrutiny.

When it’s worth bringing in an external reviewer

Teams usually ask for help when:

- The current system is legacy-heavy and the “best” stack is constrained by migration reality

- Compliance and audit expectations raise the cost of wrong choices

- Multiple teams need a standard, but autonomy and delivery speed must remain high

- You need a neutral evaluation to align leadership and engineering

Wolf-Tech supports organizations with tech stack strategy, legacy optimization, and full-stack development across modern stacks, with an emphasis on proof, operability, and long-term maintainability. If you want a second set of eyes, an architecture review format can help you validate assumptions quickly. (Related: What a Tech Expert Reviews in Your Architecture.)

If you’d like, share your context pack and your top two candidates, and we can help you pressure-test the scorecard, define the thin slice, and turn the decision into an actionable delivery plan.