Custom Web Application Development Services: What to Expect

Buying custom web application development services is less like purchasing a finished product and more like commissioning a delivery system. The value is not only “engineers writing code,” but a repeatable way to discover the right scope, build it safely, ship it reliably, and keep it healthy as requirements, traffic, and integrations change.

This guide explains what you should expect from a professional web app development partner, what deliverables to ask for, how timelines typically break down, and how to spot hidden risks early.

What “custom web application development services” should include (end to end)

A serious provider should be able to cover the full lifecycle, even if your in-house team owns parts of it. In practice, custom web app development services usually span:

- Discovery and scoping: clarifying outcomes, users, constraints, and what “done” means.

- UX and product design: flows, states (loading, errors, permissions), accessibility, and content structure.

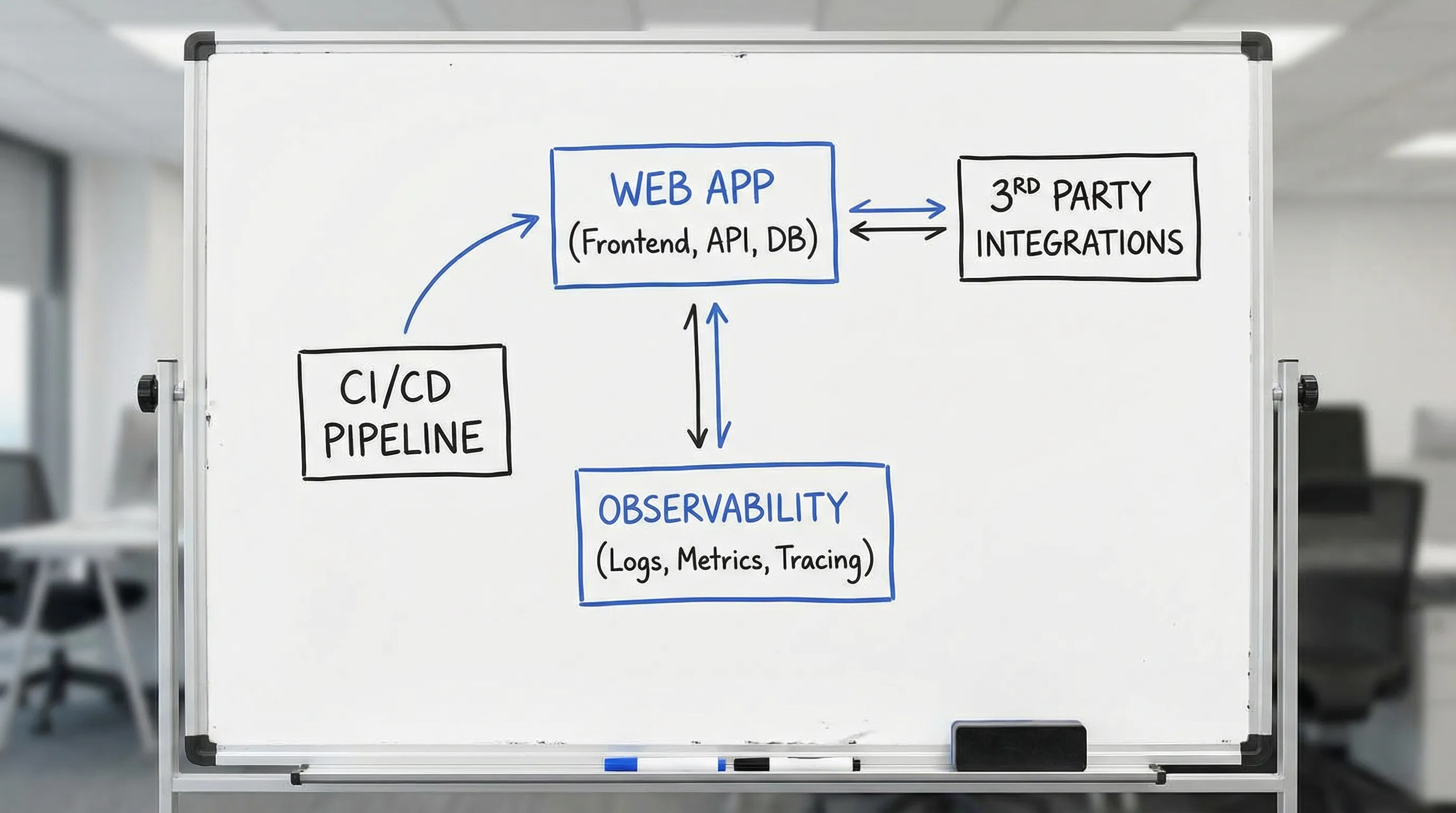

- Architecture and tech stack strategy: frontend, backend/API, data, integrations, and platform choices that fit your constraints.

- Implementation: building features with reviewable increments, not big-bang releases.

- Quality engineering: automated testing strategy, quality gates, and defect prevention.

- Security engineering: threat modeling, secure defaults, dependency hygiene, and release controls.

- Cloud and DevOps: CI/CD, environments, infrastructure as code, deployment strategy, cost guardrails.

- Observability and operations: logs, metrics, tracing, alerting, incident readiness, and performance monitoring.

- Post-launch iteration: improvements driven by usage data, support tickets, and business metrics.

If you want a quick refresher on what technically makes something a “web application” (versus a website), Wolf-Tech already has a strong primer: Web Application: What Is It and How Does It Work?

The phases you should expect (and what you should get in each)

Most web apps fail for predictable reasons: unclear scope, missing non-functional requirements, risky integrations discovered too late, and “we’ll add ops/security later.” A mature engagement makes those risks explicit and handles them in order.

Here is a practical expectation set you can use when comparing vendors.

| Phase | Goal | Typical deliverables you should receive | Your inputs that unblock the work |
|---|---|---|---|
| Discovery and alignment | Agree on outcomes, users, constraints, and measurable success | Problem statement, scope boundaries, prioritized risks, high-level backlog, success metrics | Stakeholders, domain experts, access to existing system docs and constraints |
| UX to architecture alignment | Prevent rewrites by aligning experience and system contracts early | Key user journeys, state model (happy path plus failures), early API sketches, performance budgets | Real workflows, edge cases, compliance needs, brand/accessibility constraints |
| Architecture baseline | Choose a default path that is safe to ship and operate | Architecture diagram, ADRs (decision records), integration plan, data model outline | Current infra constraints, vendor/third-party contracts, security policies |
| Thin vertical slice | Prove feasibility end to end in production-like conditions | Working slice through UI, API, data, auth, CI/CD, basic observability | Test users, environment access, representative data samples |
| MVP build-out | Expand to the smallest complete product | Incremental releases, test coverage growth, operational runbooks, backlog refinement | Prioritization decisions, acceptance criteria, UAT feedback |
| Launch readiness | Reduce blast radius and make release reversible | Release plan, monitoring and alerting, rollback plan, SLOs/SLIs draft | Support ownership, incident contacts, launch comms and training |
| Operate and improve | Keep reliability, cost, and delivery speed under control | Iteration plan, performance fixes, security patching cadence, roadmap support | Usage analytics, support tickets, business KPI feedback |
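
The launch-readiness row asks for a draft of SLOs and SLIs. The arithmetic behind an availability SLO is worth seeing once: a target over a time window implies an error budget, the amount of failure you can absorb before (in many teams' policies) reliability work takes priority over new features. This is a minimal Python sketch; the function names are hypothetical, the 99.9% target and 30-day window in the usage note are illustrative assumptions, and counting good versus total requests is only one possible SLI.

```python
from datetime import timedelta


def error_budget(slo_target: float, window: timedelta) -> timedelta:
    """Return the allowed 'bad' time in a window for an availability SLO.

    slo_target is a fraction, e.g. 0.999 for 99.9%.
    """
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be between 0 and 1 (exclusive)")
    return window * (1.0 - slo_target)


def budget_remaining(slo_target: float, good_events: int, total_events: int) -> float:
    """Fraction of the error budget still unspent, using request counts as the SLI.

    1.0 means no budget used; 0.0 means exactly used up; negative means overspent.
    """
    if total_events == 0:
        return 1.0
    allowed_bad = (1.0 - slo_target) * total_events
    actual_bad = total_events - good_events
    return 1.0 - actual_bad / allowed_bad
```

For example, `error_budget(0.999, timedelta(days=30))` comes out to 43.2 minutes (0.1% of 43,200 minutes), and a month with 500 failed requests out of 1,000,000 leaves roughly half of the budget unspent.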

Two Wolf-Tech articles that go deeper on these mechanics (without fluff) are worth keeping open while you evaluate partners:

- Custom Web Application Development: From Idea to Launch

- Software Project Kickoff: Scope, Risks, and Success Metrics

Deliverables that separate “professional services” from “staff augmentation”

Many teams hire help expecting an app and instead receive a pile of code plus a few Slack messages. Your baseline expectation should be transferable ownership.

A professional partner should leave you with assets that let you continue development with confidence:

Product and decision artifacts

You should expect lightweight, explicit documentation that prevents re-litigating the same arguments every sprint:

- A clear, testable scope statement and success metrics

- A decision log (often via ADRs) for major architectural choices

- A risk register with owners and mitigations

- A definition of done that includes production readiness, not only feature completion

Engineering artifacts

These are the “proof of seriousness” deliverables:

- A repository you can access, with a working build and a predictable structure

- Versioned API contracts (and a clear approach to breaking changes)

- A working CI pipeline that runs tests and enforces quality gates

- Automated tests appropriate to the risk profile (not theater)

- Environment and deployment documentation
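
"A clear approach to breaking changes" can be made mechanical enough to run in CI. The sketch below compares two versions of a simplified API response schema, modeled here as a plain dict of field name to type name (an assumption for brevity; real contracts are usually OpenAPI or JSON Schema documents), and suggests a semantic-versioning bump. For responses, removing a field or changing its type can break existing clients, while adding a field normally does not. All names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ContractDiff:
    removed: list[str] = field(default_factory=list)
    retyped: list[str] = field(default_factory=list)
    added: list[str] = field(default_factory=list)

    @property
    def is_breaking(self) -> bool:
        # Clients that read a removed or retyped field would break.
        return bool(self.removed or self.retyped)


def diff_response_schema(old: dict[str, str], new: dict[str, str]) -> ContractDiff:
    """Compare two response schemas given as {field_name: type_name}."""
    diff = ContractDiff()
    for name, old_type in old.items():
        if name not in new:
            diff.removed.append(name)
        elif new[name] != old_type:
            diff.retyped.append(name)
    diff.added = [name for name in new if name not in old]
    return diff


def suggested_bump(diff: ContractDiff) -> str:
    """Map a response-contract diff to a semantic-versioning bump."""
    if diff.is_breaking:
        return "major"
    if diff.added:
        return "minor"
    return "patch"
```

Comparing `{"id": "string", "total": "integer"}` with `{"id": "string", "total": "number", "currency": "string"}` reports `total` as retyped and `currency` as added, so the suggested bump is "major". Request schemas need the opposite care: there, adding a required field is the breaking change.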

If you want a detailed, buyer-oriented checklist of what “good by default” looks like, this Wolf-Tech post is aligned with modern expectations: Custom Software Services: What You Should Get by Default

How quality should be handled (without slowing delivery)

“Quality” is not a single activity; it is a set of constraints that keep changes safe. The best partners treat quality as a delivery accelerant rather than a brake.

Expect quality gates, not heroics

A credible team will proactively implement:

- Code review norms (small pull requests, fast review loops)

- Static analysis and formatting (consistent standards, reduced review noise)

- Test strategy clarity (what is covered by unit tests, integration tests, end-to-end tests)

- Regression prevention (CI that blocks risky changes, plus monitoring to catch escapes)
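
A quality gate is simply a check whose verdict the pipeline acts on. The sketch below evaluates a hypothetical pull-request summary against example thresholds: a cap on changed lines to keep reviews small, and a minimum line-coverage figure. The field names, the 400-line cap, and the 80% coverage floor are illustrative assumptions, not standards; tune them to your own risk profile.

```python
from dataclasses import dataclass


@dataclass
class PullRequestSummary:
    # Hypothetical inputs a CI job might collect from git, the linter, and the test runner.
    lines_changed: int
    coverage_percent: float
    tests_passed: bool
    lint_errors: int


# Illustrative thresholds only.
MAX_LINES_CHANGED = 400
MIN_COVERAGE_PERCENT = 80.0


def evaluate_gates(pr: PullRequestSummary) -> list[str]:
    """Return human-readable gate failures; an empty list means the PR may merge."""
    failures = []
    if not pr.tests_passed:
        failures.append("test suite failed")
    if pr.lint_errors > 0:
        failures.append(f"{pr.lint_errors} lint/static-analysis error(s)")
    if pr.lines_changed > MAX_LINES_CHANGED:
        failures.append(
            f"PR changes {pr.lines_changed} lines (limit {MAX_LINES_CHANGED}); consider splitting it"
        )
    if pr.coverage_percent < MIN_COVERAGE_PERCENT:
        failures.append(
            f"coverage {pr.coverage_percent:.1f}% is below {MIN_COVERAGE_PERCENT:.1f}%"
        )
    return failures


def gate_exit_code(pr: PullRequestSummary) -> int:
    """Non-zero exit codes are what most CI systems treat as a failed step."""
    return 1 if evaluate_gates(pr) else 0
```

In a pipeline step, the script would print each failure and pass `gate_exit_code(...)` to `sys.exit`, so a risky change is blocked before merge rather than discovered in production.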

If you like measuring rather than guessing, it helps to track outcome-linked signals instead of vanity metrics. Wolf-Tech’s guide on code quality metrics that matter is a strong framework for buyer and vendor alignment.

Expect performance to be budgeted and monitored

For user-facing web applications, performance should be treated as a requirement, not a post-launch surprise. Many teams use Core Web Vitals as a practical baseline, especially for public-facing routes. Google maintains the reference documentation for these user-centric metrics: Core Web Vitals.

A good partner will propose performance budgets (for example, bundle size constraints, API latency targets) and add checks to prevent regressions.
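
Here is a minimal sketch of such a regression check. It uses the "good" thresholds from Google's Core Web Vitals documentation at the time of writing (Largest Contentful Paint at or below 2.5 s, Interaction to Next Paint at or below 200 ms, Cumulative Layout Shift at or below 0.1, each assessed at the 75th percentile of page loads). The metric keys, the sample data, and the nearest-rank percentile method are assumptions made for the example; field samples would typically come from a real-user monitoring tool.

```python
import math

# "Good" Core Web Vitals thresholds, compared against the 75th percentile.
CWV_GOOD_THRESHOLDS = {
    "LCP_ms": 2500.0,  # Largest Contentful Paint, milliseconds
    "INP_ms": 200.0,   # Interaction to Next Paint, milliseconds
    "CLS": 0.1,        # Cumulative Layout Shift, unitless
}


def p75(samples: list[float]) -> float:
    """75th percentile using the nearest-rank method (a simplifying choice)."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def budget_violations(
    field_data: dict[str, list[float]], budgets: dict[str, float]
) -> dict[str, float]:
    """Return {metric: p75_value} for every metric whose p75 exceeds its budget."""
    violations = {}
    for metric, limit in budgets.items():
        samples = field_data.get(metric)
        if not samples:
            continue  # no data for this metric; a stricter check could fail here instead
        value = p75(samples)
        if value > limit:
            violations[metric] = value
    return violations
```

The same `budgets` dict can carry team-specific entries, such as a hypothetical `"bundle_kb"` limit or an `"api_latency_ms"` target, so one check covers both user-centric metrics and engineering budgets.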

How security should show up in the engagement

Security is an engineering discipline, but it is also a procurement and governance topic. You should expect your vendor to discuss security early, in plain language, and tie it to concrete controls.

Baseline expectations for modern custom web applications include:

- Secure-by-default development practices (secrets management, least privilege, safe configuration)

- Dependency and supply chain hygiene (understanding what runs in production and how it is updated)

- Threat modeling for the critical flows (auth, payments, admin actions, data export)

- Release safety (auditability, rollback strategy, and access controls)

Two credible reference points many mature teams align with are:

- OWASP Top 10 for common web app risk classes

- NIST Secure Software Development Framework (SSDF) for a governance-friendly way to organize secure development practices

You do not need to implement everything at once, but you should expect a partner to explain what they do by default, what is optional, and what evidence you will see in the repo and pipeline.
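
As one example of repo-level evidence for "secure-by-default development practices," configuration code can show that secrets come from the environment (or a secrets manager that populates it), that the app refuses to start without them, and that risky options default to off. This is a minimal sketch; the variable names `DATABASE_URL`, `SESSION_SECRET`, and `APP_DEBUG` and the 32-character minimum are hypothetical choices for illustration.

```python
import os
from collections.abc import Mapping
from dataclasses import dataclass


class ConfigError(RuntimeError):
    """Raised when required configuration is missing or unsafe."""


@dataclass(frozen=True)
class AppConfig:
    database_url: str
    session_secret: str
    debug: bool

    def __repr__(self) -> str:
        # Keep secret values out of logs and tracebacks.
        return f"AppConfig(database_url=<redacted>, session_secret=<redacted>, debug={self.debug})"


def load_config(env: Mapping[str, str] = os.environ) -> AppConfig:
    """Load configuration from environment variables, failing closed."""
    missing = [key for key in ("DATABASE_URL", "SESSION_SECRET") if not env.get(key)]
    if missing:
        raise ConfigError(f"missing required settings: {', '.join(missing)}")
    secret = env["SESSION_SECRET"]
    if len(secret) < 32:  # hypothetical minimum length for this example
        raise ConfigError("SESSION_SECRET is too short")
    # Debug mode is opt-in: anything other than an explicit "true" keeps it off.
    debug = env.get("APP_DEBUG", "false").strip().lower() == "true"
    return AppConfig(database_url=env["DATABASE_URL"], session_secret=secret, debug=debug)
```

Accepting the environment as a parameter keeps the loader testable without touching real secrets, and a reviewer can confirm the fail-closed behavior from a single function.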

Timeline expectations (and why “it depends” is not good enough)

Timelines vary, but a vendor should still be able to provide ranges and what drives them. If you only get “it depends,” you are missing a planning model.

Common patterns for custom web applications:

- Discovery and alignment: often 1 to 3 weeks for a focused scope, longer if stakeholders are fragmented or requirements are uncertain.

- Thin vertical slice: often 2 to 6 weeks to prove end-to-end feasibility (UI, API, data, auth, deployment) in a production-like way.

- MVP build-out: commonly 6 to 12+ weeks, depending on the number of user roles, integrations, and non-functional requirements.

- Launch readiness: typically overlaps with MVP work, but if production readiness was not built in from day one, expect at least 1 to 2 weeks of focused hardening.
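
As a rough sanity check on a vendor's estimate, the ranges above can simply be added up. The sketch below uses exactly the numbers from the list, treats the phases as strictly sequential (which overstates the total when hardening overlaps MVP work), and carries the MVP's "12+" through as an open upper bound. The phase keys and function name are hypothetical.

```python
from typing import NamedTuple, Optional


class PhaseEstimate(NamedTuple):
    min_weeks: int
    max_weeks: int
    open_ended: bool = False  # True when the range is written as "N+"


PHASES = {
    "discovery_and_alignment": PhaseEstimate(1, 3),
    "thin_vertical_slice": PhaseEstimate(2, 6),
    "mvp_build_out": PhaseEstimate(6, 12, open_ended=True),
    # Only a separate phase if production readiness was not built in from day one.
    "launch_hardening": PhaseEstimate(1, 2),
}


def sequential_total(
    phases: dict[str, PhaseEstimate], include: Optional[set[str]] = None
) -> str:
    """Sum min and max weeks of the selected phases, assuming no overlap."""
    selected = [p for name, p in phases.items() if include is None or name in include]
    low = sum(p.min_weeks for p in selected)
    high = sum(p.max_weeks for p in selected)
    suffix = "+" if any(p.open_ended for p in selected) else ""
    return f"{low} to {high}{suffix} weeks"
```

With all four phases the total is "10 to 23+ weeks"; folding hardening into MVP work gives "9 to 21+ weeks". A proposal far outside that band for a comparable scope deserves an explanation of what drives the difference.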

A practical way to keep schedules honest is to define “done” as shippable increments. Wolf-Tech’s broader process framing in Software Building: A Practical Process for Busy Teams matches how high-performing teams reduce delivery risk.

How collaboration should work (roles, cadence, and decision rights)

A web app engagement is a cross-functional effort. Even if you outsource development, you cannot outsource product decisions.

At minimum, expect clarity on:

Who decides what

Your partner should propose explicit decision rights, for example:

- Product priorities and acceptance criteria (usually yours)

- Architecture guardrails and technical standards (shared, with a clear technical owner)

- Security and compliance constraints (shared with your security/compliance stakeholders)

- Release go/no-go criteria (shared, defined early)

How progress is reported

Status reporting should not be a slide deck; it should be evidence from the delivery system:

- Shipped increments in a staging or preview environment

- A running risk list with mitigations

- Measured delivery and reliability signals (many teams use DORA-style metrics as a starting point; see the research program at DORA)
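
To show what "evidence from the delivery system" can look like, here is a simplified computation of the four DORA key metrics (deployment frequency, lead time for changes, change failure rate, and time to restore service) from a list of deployment records. The `Deployment` record shape is a hypothetical assumption, and the definitions are deliberately simplified; DORA's own guidance is more precise about how each metric should be measured.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    deployed_at: datetime
    commit_times: list[datetime]            # when each change in this deploy was committed
    caused_failure: bool = False            # did this deploy degrade service?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_summary(deploys: list[Deployment], period_days: int) -> dict[str, float]:
    """Compute simplified versions of the four DORA key metrics for one period."""
    if not deploys:
        raise ValueError("need at least one deployment")
    lead_times_h = [
        (d.deployed_at - c).total_seconds() / 3600 for d in deploys for c in d.commit_times
    ]
    failures = [d for d in deploys if d.caused_failure]
    # Simplification: the failure is assumed to start at deploy time.
    restore_times_h = [
        (d.restored_at - d.deployed_at).total_seconds() / 3600
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_week": len(deploys) / (period_days / 7),
        "median_lead_time_hours": median(lead_times_h) if lead_times_h else 0.0,
        "change_failure_rate": len(failures) / len(deploys),
        "median_time_to_restore_hours": median(restore_times_h) if restore_times_h else 0.0,
    }
```

The value is in the trend rather than a single snapshot: asking a vendor for these numbers per sprint turns "progress is good" into something you can verify.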

If you want to pressure-test whether a vendor can run this kind of engagement, Wolf-Tech’s buyer guide How to Vet Custom Software Development Companies offers a practical evaluation flow and the proofs to request.

What to expect around technology choices (and how to avoid a stack driven by fashion)

A partner should never pick a stack because “we always use X.” They should pick it because it fits your constraints over the next 12 to 36 months.

You should expect:

- A clear explanation of the architectural baseline (often a modular monolith early, unless strong reasons push otherwise)

- A plan for integrations and data, including how breaking changes and migrations are handled

- A deployment model and operational assumptions (runtime, caching strategy, observability, cost)
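
A modular monolith only stays modular if its internal boundaries are enforced. One lightweight approach, sketched below, is to declare which modules may depend on which and have CI reject imports that cross a boundary. The module names are hypothetical, and in a real codebase the observed imports would come from a static analysis or dependency-graph tool rather than a hand-written dict.

```python
# Hypothetical modules of one deployable application and their allowed dependencies.
ALLOWED_DEPENDENCIES: dict[str, set[str]] = {
    "billing": {"accounts", "shared"},
    "accounts": {"shared"},
    "reporting": {"billing", "accounts", "shared"},
    "shared": set(),
}


def boundary_violations(
    observed_imports: dict[str, set[str]], allowed: dict[str, set[str]]
) -> list[tuple[str, str]]:
    """Return (importer, imported) pairs that break the declared module boundaries.

    Modules missing from `allowed` are treated as having no permitted dependencies.
    """
    violations = []
    for module, imports in observed_imports.items():
        permitted = allowed.get(module, set())
        for target in sorted(imports):
            if target != module and target not in permitted:
                violations.append((module, target))
    return violations
```

Because the allowed-dependency map is a reviewable artifact, it doubles as documentation of the architecture baseline, and changing a boundary becomes an explicit decision rather than an accident.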

Wolf-Tech has two strong resources you can use as a neutral benchmark during vendor conversations:

- Apps Technologies: Choosing the Right Stack for Your Use Case

- Web Development Technologies: What Matters in 2026

Post-launch: where a responsible partner stays involved

A web app becomes “real” when it hits production. That is when operational gaps, user behavior, and data edge cases surface.

At minimum, expect a post-launch plan that covers:

- Monitoring for user-impacting failures and performance regressions

- A patching approach for dependencies and security updates

- A process for handling incidents and support escalations

- A way to prioritize improvements based on real usage data
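
"Monitoring for user-impacting failures" works best when alerts fire on user impact rather than on every spike. One widely used pattern is burn-rate alerting against an SLO: the burn rate is the observed error ratio divided by the error budget ratio (1 minus the SLO target), so 1.0 means the budget is being spent exactly on schedule. The sketch below pages only when both a long and a short window burn fast. The 99.9% target and the 14.4 threshold are illustrative defaults (at 14.4x, about 2% of a 30-day budget is consumed in one hour), and the function names are hypothetical.

```python
def burn_rate(error_ratio: float, slo_target: float) -> float:
    """How fast the error budget is being consumed (1.0 = exactly on budget)."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be between 0 and 1 (exclusive)")
    return error_ratio / (1.0 - slo_target)


def should_page(
    long_window_error_ratio: float,
    short_window_error_ratio: float,
    slo_target: float = 0.999,
    threshold: float = 14.4,
) -> bool:
    """Page only if both windows burn fast.

    The long window (e.g. 1 hour) shows the problem is significant; the short
    window (e.g. 5 minutes) shows it is still happening, so alerts clear quickly.
    """
    return (
        burn_rate(long_window_error_ratio, slo_target) > threshold
        and burn_rate(short_window_error_ratio, slo_target) > threshold
    )
```

For example, a 2% error ratio over the last hour combined with 3% over the last five minutes pages someone, while a brief spike that has already subsided does not.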

If your app touches legacy systems, post-launch stability depends heavily on integration seams and safe modernization patterns. Wolf-Tech’s Modernizing Legacy Systems Without Disrupting Business is a good playbook for what “safe change” looks like in real organizations.

Red flags when buying custom web application development services

You do not need perfection, but you should avoid predictable failure modes. Watch for:

- Vague answers about what you will “own” at the end (repo access, infrastructure access, documentation)

- “We will add testing later” or “QA at the end” as the default plan

- No mention of security practices, dependency risk, or release safety

- Architecture decisions made before discovery, UX flows, and constraints are understood

- Big milestones with no intermediate shipped increments

- No operational story (monitoring, on-call expectations, incident handling)

A good partner will welcome these concerns, because addressing them early reduces rework and protects delivery velocity.

Where Wolf-Tech fits

Wolf-Tech focuses on building, optimizing, and scaling web applications with full-stack development expertise, backed by code quality consulting, legacy code optimization, tech stack strategy, and cloud and DevOps experience.

If you are evaluating a build, a rebuild, or a modernization effort, a good next step is to align on expectations and proofs early, before you commit to a long engagement. You can use Wolf-Tech’s existing guides as checklists, then discuss your specific constraints with their team at Wolf-Tech.