Application Development Services: A Buyer’s Checklist

Buying application development services is rarely about “can they code.” It is about whether a team can reliably turn your outcomes into a system you can ship, operate, and evolve without surprises.

This buyer’s checklist is designed for CTOs, product leaders, and procurement teams comparing vendors for custom application development (web, mobile, internal platforms, API-first products, and modernization work). Use it to pressure-test proposals, align expectations early, and prevent the most expensive failure mode: a “working” app that cannot be safely changed.

What you are actually buying when you buy application development services

At a minimum, a vendor will write code. A good partner provides a repeatable delivery capability: clear scope, measurable quality, production readiness, and decision support when trade-offs appear.

If you are only comparing feature lists and hourly rates, you will miss the costs that show up later:

- Release friction (slow deployments, manual steps, fear of change)

- Operability gaps (no visibility, slow incident response)

- Security and compliance rework (late reviews, missing audit trails)

- Hidden integration complexity (data contracts, identity, third-party limits)

A practical baseline expectation: the vendor should help you reduce delivery risk early, using evidence rather than promises. A “thin vertical slice” shipped to a real environment beats a 40-page plan every time.

If you want a deeper end-to-end view of the lifecycle and deliverables, Wolf-Tech’s companion guide is useful: Custom software application development: end-to-end guide.

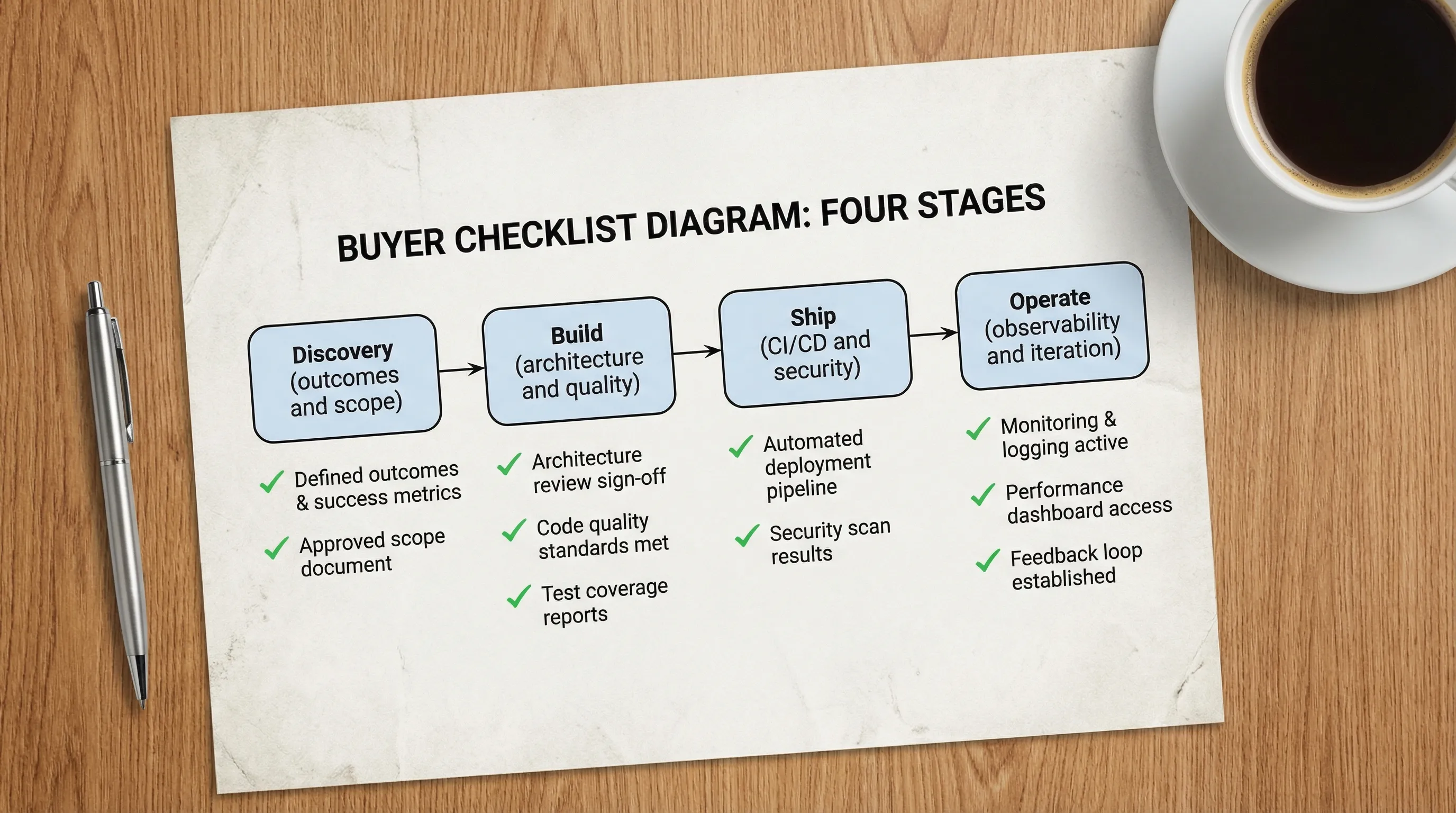

Buyer’s checklist: 12 checks that separate “builders” from “delivery partners”

Use the checks below as acceptance criteria in your selection process. You do not need every item on day one, but you should know which ones are in-scope, which are staged, and what “done” means.

1) Outcomes and success metrics are explicit (not implied)

A credible proposal starts with outcomes, constraints, and a definition of success that can be measured after launch.

Look for clarity on:

- Primary user journeys and the business outcome they support

- Non-functional requirements (NFRs) like latency, availability, and data retention

- A measurement plan (what will be instrumented, when, and why)

If your vendor cannot articulate success metrics, they will optimize for output (tickets closed) instead of outcomes (value delivered). Wolf-Tech’s approach to kickoff artifacts can help you set the bar: Software project kickoff: scope, risks, and success metrics.

2) Scope is a testable slice, not a wishlist

For application development services, the safest early scope is a thin vertical slice: one end-to-end workflow that touches UI, API, data, auth, and deployment.

In proposals, prefer:

- A slice-based plan with exit criteria (what proves the slice is real)

- Explicit “not now” decisions (what is deferred, and what risks that creates)

- A backlog organized by outcomes and risk, not by UI screens alone

If you want a concrete model for slicing, start here: Build a web application: step-by-step checklist.

3) UX and architecture are aligned early (the “handshake”)

Misalignment between UX expectations and system reality is a common cause of rewrites. Buyers should require an explicit alignment loop between design and engineering.

Ask how the vendor prevents:

- “Fast in Figma, slow in production” experiences

- Missing states (loading, errors, offline, permissions, retries)

- UX that assumes data consistency the system cannot guarantee

A high-signal indicator is whether they produce concrete handshake artifacts (journeys, state models, API sketches, performance budgets). See: Web application designing: UX to architecture handshake.

4) Architecture decisions are documented and reversible where possible

You are not buying “microservices” or “serverless.” You are buying fit-for-purpose boundaries and change safety.

Require:

- An architecture baseline (even if lightweight)

- Architecture Decision Records (ADRs) for major trade-offs

- A plan for evolutionary change (how the system will grow without a rewrite)

If you want to understand what a serious review covers, see: What a tech expert reviews in your architecture.

5) The tech stack is chosen with a time horizon, not trends

Stack decisions should be justified by constraints: team skill, expected scale, security posture, and operability. A vendor should be able to explain what they would not choose and why.

Good signs:

- A scorecard approach tied to NFRs and delivery realities

- A validation plan using a thin slice (not a months-long “platform phase”)

Related reading: Apps technologies: choosing the right stack for your use case.

6) Code quality is enforced by automation (not heroics)

Buyers should ask to see the quality system, not settle for “we do code reviews.” The core question is: how do they prevent defects and regressions as velocity increases?

Minimum expectations:

- Defined quality gates in CI (linting, tests, static analysis where relevant)

- PR review standards (size limits, ownership, change documentation)

- A testing strategy aligned to risk (unit, integration, end-to-end, contract)
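As an illustration of “quality gates in CI,” here is a minimal Python sketch of a gate runner a pipeline step could call. It assumes each gate is a command whose exit code signals pass or fail; the gate names and the `ruff`/`pytest` commands are placeholders rather than a recommendation, and `run_quality_gates` and `report` are hypothetical helpers.

```python
import subprocess
import sys

# Hypothetical example gates; substitute your project's real commands.
EXAMPLE_GATES = {
    "lint": ["ruff", "check", "."],
    "unit-tests": ["pytest", "-q"],
}


def run_quality_gates(gates: dict[str, list[str]]) -> dict[str, bool]:
    """Run each gate command and record whether it exited with code 0."""
    results: dict[str, bool] = {}
    for name, command in gates.items():
        try:
            completed = subprocess.run(command, capture_output=True, text=True)
        except FileNotFoundError:
            # A missing tool counts as a failed gate, not a skipped one.
            results[name] = False
            continue
        results[name] = completed.returncode == 0
    return results


def report(results: dict[str, bool]) -> int:
    """Print a pass/fail summary and return the exit code CI should use."""
    for name, passed in results.items():
        print(f"{name}: {'pass' if passed else 'FAIL'}", file=sys.stderr)
    return 0 if all(results.values()) else 1
```

A CI step would call `sys.exit(report(run_quality_gates(EXAMPLE_GATES)))`, so any failing or missing tool blocks the merge instead of being silently skipped.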

For a pragmatic view of metrics that actually predict outcomes, see: Code quality metrics that matter.

7) Security is built into the delivery system

Security should be a set of repeatable practices and controls, not a one-time penetration test at the end.

Ask how they handle:

- Dependency risk and supply chain security

- Secrets management and environment separation

- Secure-by-default auth patterns, session handling, and authorization
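One concrete control behind “secrets management” is a backstop that scrubs obvious credentials before log records are written. The sketch below uses Python’s standard `logging.Filter`; the regex patterns and the `RedactSecretsFilter` name are illustrative assumptions, and pattern-based redaction complements, rather than replaces, keeping secrets out of code paths in the first place.

```python
import logging
import re

# Illustrative patterns only; real secret formats vary by provider and should be
# extended for the credentials your system actually handles.
SECRET_PATTERNS = [
    # key=value or key: value pairs for common secret-bearing names
    (re.compile(r"(?i)\b(api[_-]?key|token|password)(\s*[=:]\s*)\S+"), r"\1\2[REDACTED]"),
    # HTTP bearer tokens (base64url-style characters)
    (re.compile(r"(?i)\b(bearer\s+)[a-z0-9._~+/=\-]+"), r"\1[REDACTED]"),
]


class RedactSecretsFilter(logging.Filter):
    """Rewrites each record's final message so matched secret values are masked."""

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()  # message with %-style args already applied
        for pattern, replacement in SECRET_PATTERNS:
            message = pattern.sub(replacement, message)
        record.msg = message
        record.args = None  # args are already merged into msg
        return True  # never drop the record, only mask it
```

Attach the filter to handlers (for example `handler.addFilter(RedactSecretsFilter())`) rather than only to a logger: a logger-level filter is not applied to records propagated up from descendant loggers.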

If you want an external baseline to reference in contracts, the NIST Secure Software Development Framework (SSDF) is widely used for mapping practices to expectations.

8) Data model and integration contracts are treated as first-class

Integrations are where timelines slip. Buyers should require early proof for any system that depends on external APIs, legacy databases, or vendor platforms.

Strong vendors will propose:

- Contract-first API design (and versioning strategy)

- Migration approach for legacy data (including rollback and reconciliation)

- Rate limit and failure-mode handling for third-party APIs
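Rate-limit handling is easy to promise and easy to get wrong. A common pattern is to retry with exponential backoff and jitter while honoring a server-provided wait (HTTP 429 responses often carry a `Retry-After` header). This is a minimal sketch: `RateLimitedError` is a hypothetical exception your API client wrapper would raise, and the default delays are placeholders to tune per provider.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class RateLimitedError(Exception):
    """Hypothetical error raised by your API client wrapper on HTTP 429."""

    def __init__(self, retry_after: Optional[float] = None) -> None:
        super().__init__("rate limited by upstream API")
        self.retry_after = retry_after  # seconds, if the provider sent Retry-After


def call_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on RateLimitedError with capped exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except RateLimitedError as err:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            if err.retry_after is not None:
                delay = min(err.retry_after, max_delay)  # trust the provider's hint
            else:
                # Full jitter: pick a random delay up to the exponential cap.
                cap = min(max_delay, base_delay * 2 ** (attempt - 1))
                delay = random.uniform(0, cap)
            sleep(delay)
    raise RuntimeError("unreachable")  # the loop always returns or raises
```

Passing `sleep` as a parameter keeps the retry policy testable without real waiting. Full jitter (a random delay up to the exponential cap) spreads retries out so many clients do not hit the provider in lockstep.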

This is also where build-vs-buy decisions often hide. If you are uncertain, this helps structure the decision: Custom software applications: build vs buy vs hybrid.

9) CI/CD, environments, and release strategy are included from day one

If “deployment” is an afterthought, you will pay for it later. Application development services should include a delivery system that supports safe, frequent releases.

Require clarity on:

- Branching strategy and release cadence

- Environment strategy (dev, staging, prod, previews if relevant)

- Rollback and progressive delivery approach (feature flags, canaries)
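Feature flags and canaries both rely on exposing a change to a stable subset of users. A common technique is deterministic bucketing: hash the flag name and user ID into a bucket and compare it to the rollout percentage. This sketch assumes string user IDs; the `in_rollout` helper and the flag names are hypothetical, and a managed flag service would typically handle this for you.

```python
import hashlib


def in_rollout(flag_name: str, user_id: str, percentage: float) -> bool:
    """Deterministically decide whether user_id is inside a flag's rollout."""
    if not 0 <= percentage <= 100:
        raise ValueError("percentage must be between 0 and 100")
    # Salting with the flag name gives each flag an independent user population.
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).digest()
    # Map the hash onto 10,000 buckets, i.e. 0.01% granularity.
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return bucket < percentage * 100
```

Because a user’s bucket never changes, raising the percentage from 10 to 20 only adds users; nobody who already has the feature loses it mid-rollout, and setting it back to 0 acts as a fast rollback.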

A solid baseline is described here: CI/CD technology: build, test, deploy faster.

10) Observability and SLOs are part of “done”

A production app without telemetry is a support nightmare. Buyers should ask: how will we know it is healthy, and how will we debug issues fast?

Look for:

- Logging, metrics, tracing (at least a plan and initial implementation)

- Error monitoring and alert routing

- Service Level Objectives (SLOs) tied to user experience
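SLOs become actionable through an error budget: the share of requests allowed to fail over a window. For a request-based availability SLO of 99.9% over 1,000,000 requests, the budget is 1,000 failed requests, so 250 failures consume 25% of it. A minimal sketch of that arithmetic, assuming you can count total and failed requests for the window (the names are illustrative):

```python
from dataclasses import dataclass


@dataclass
class ErrorBudget:
    allowed_failures: float   # failures the SLO tolerates in this window
    consumed_fraction: float  # share of that allowance already used

    @property
    def remaining_fraction(self) -> float:
        return max(0.0, 1.0 - self.consumed_fraction)


def error_budget(slo_target: float, total_requests: int, failed_requests: int) -> ErrorBudget:
    """Compute a request-based error budget, e.g. slo_target=0.999 for 99.9%."""
    if not 0 < slo_target < 1:
        raise ValueError("slo_target must be a fraction such as 0.999")
    allowed = (1 - slo_target) * total_requests
    if allowed == 0:
        # No traffic yet: nothing consumed unless something already failed.
        consumed = 0.0 if failed_requests == 0 else float("inf")
    else:
        consumed = failed_requests / allowed
    return ErrorBudget(allowed, consumed)
```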

If you want a delivery metric lens that correlates with performance and reliability outcomes, DORA metrics are a useful reference point (deploy frequency, lead time, change failure rate, and time to restore service). The Google Cloud DORA research is a good starting point.
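To make the four DORA metrics tangible, here is a rough sketch that computes them from a list of deployment records. The `Deployment` fields are assumptions about what your pipeline and incident tooling record, and measuring time to restore from the deploy time is a simplification (teams often measure from when the failure was detected).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    commit_time: datetime                     # when the change was committed
    deploy_time: datetime                     # when it reached production
    caused_failure: bool = False              # did it degrade service or need remediation?
    restored_time: Optional[datetime] = None  # when service recovered, if it failed


def dora_summary(deploys: list[Deployment], window_days: int) -> dict[str, object]:
    """Summarize deploy frequency, lead time, change failure rate, and time to restore."""
    if not deploys or window_days <= 0:
        raise ValueError("need deployments and a positive window")
    failures = [d for d in deploys if d.caused_failure]
    restore_times = [d.restored_time - d.deploy_time for d in failures if d.restored_time]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "median_lead_time": median(d.deploy_time - d.commit_time for d in deploys),
        "change_failure_rate": len(failures) / len(deploys),
        "median_time_to_restore": median(restore_times) if restore_times else None,
    }
```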

11) Performance and cost are managed proactively

Buyers should not accept “we’ll optimize later” without a plan. Performance and cloud cost are product features, and they should be budgeted.

Ask for:

- Performance budgets (for example, p95 latency targets, Core Web Vitals where applicable)

- Load and capacity assumptions (and how they will be validated)

- Cost visibility and guardrails (especially for cloud and third-party services)
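A performance budget is only useful if it is checked against measurements. This sketch computes p95 latency with the nearest-rank method and compares it to a budget; the function names are illustrative, and monitoring tools often use interpolated or histogram-based percentiles, so their figures may differ slightly.

```python
import math


def percentile_nearest_rank(samples: list[float], pct: float) -> float:
    """Return the pct-th percentile using the nearest-rank definition."""
    if not samples:
        raise ValueError("need at least one latency sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def within_latency_budget(samples_ms: list[float], p95_budget_ms: float) -> bool:
    """True if measured p95 latency is at or under the budget."""
    return percentile_nearest_rank(samples_ms, 95) <= p95_budget_ms
```

Running a check like this in CI against a load-test run turns “we’ll optimize later” into a failing build the moment a change breaks the budget.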

For a practical, measurement-driven approach to performance work, see: Optimize the code: high-impact fixes beyond micro-optimizing.

12) Ownership, access, and handover are contractually unambiguous

The fastest way to end up locked in is unclear ownership of repos, cloud accounts, domains, CI secrets, and operational documentation.

Before you sign, confirm:

- IP ownership and repo access (from day one)

- Documentation expectations (runbooks, architecture notes, onboarding)

- Post-launch responsibilities (warranty, SLAs, response times, escalation)

- AI usage policy and confidentiality rules (what can be sent to third-party models)

If you want a “default deliverables” baseline to compare vendors, this is a good reference: Custom software services: what you should get by default.

A practical vendor comparison table you can reuse

Use this table to evaluate proposals and sales calls. The goal is not to force identical answers; it is to force evidence.

| Checklist area | What “good” looks like | Proof to request | Common red flag |
|---|---|---|---|
| Outcomes and metrics | Measurable success criteria tied to users and business | 1-page scope + metrics plan | Only feature lists |
| Scope and slicing | Thin vertical slice early, clear exit criteria | Slice plan + milestone gates | “Big bang MVP” with vague timelines |
| Architecture baseline | Documented boundaries, ADRs, reversibility | Architecture diagram + ADR examples | “We’ll decide later” for core choices |
| Quality system | CI gates + risk-based testing strategy | CI pipeline outline + test plan | Manual testing as the primary safety net |
| Security by design | Repeatable controls, not a final audit | Threat model outline + dependency policy | Security postponed to pre-launch |
| Delivery and releases | Automated deployments, rollback strategy | Release playbook | Manual deployments, no rollback plan |
| Operability | Logs/metrics/traces + SLOs | Monitoring plan + alerting rules | “We can add monitoring later” |
| Ownership and handover | You own repos, infra access, docs | Contract clauses + sample runbook | Restricted access or unclear IP terms |

Questions to ask in the final round (high signal, hard to bluff)

These questions tend to separate polished sales from real operational maturity:

- “Show us a recent ADR you wrote, and explain what changed your mind.”

- “What does your Definition of Done include for production readiness?”

- “Walk us through a deployment that went wrong and how you rolled back.”

- “How do you prevent secrets from leaking into logs, tickets, or AI tools?”

- “If we replace you in six months, what artifacts will make that easy?”

If answers stay abstract, ask for examples. A serious team will be comfortable showing anonymized artifacts.

Frequently Asked Questions

What are application development services, exactly?

Application development services usually include discovery, UX/design collaboration, architecture, implementation, QA/testing, CI/CD, security, cloud/DevOps, and post-launch support. The key is whether those are delivered as a coherent system, not as separate add-ons.

How do I compare fixed-price vs time-and-materials proposals?

Compare them on risk controls and clarity. Fixed-price can work when scope and acceptance criteria are testable. Time-and-materials can work when paired with strong governance, frequent demos, and clear exit criteria per milestone.

What deliverables should I require before the first major build phase?

At minimum: an outcome-focused scope, a thin vertical slice plan, an architecture baseline (even lightweight), and a delivery plan that includes CI/CD and security expectations.

How can I avoid vendor lock-in when outsourcing app development?

Make ownership explicit (repos, cloud accounts, domains), require documentation and runbooks, avoid proprietary black boxes without an exit plan, and keep architecture decisions documented via ADRs.

When should I run a pilot before committing?

If the project has meaningful integration risk, regulatory exposure, legacy complexity, or if you are unsure about the vendor’s delivery maturity, a short paid pilot that ships a thin slice is often the fastest way to validate fit.

Need a second set of eyes on a proposal or checklist?

Wolf-Tech provides full-stack application development services plus architecture and code quality consulting, with 18+ years of experience helping teams build, optimize, and scale production software.

If you want, share your current proposal(s) or a short project brief, and we can help you:

- Turn your requirements into testable vendor acceptance criteria

- Identify hidden risks (security, operability, integrations, delivery)

- Define a thin-slice plan that proves feasibility early

Start here: Wolf-Tech.